Arc Graphics Updates And PresentMon Tested: Intel Makes Huge Strides

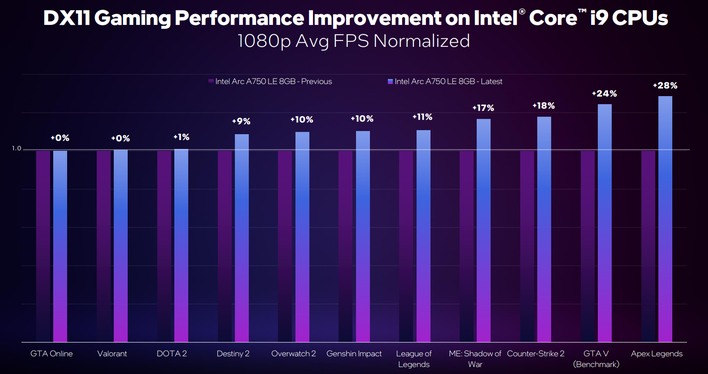

The latest driver update, released this past Wednesday, is simply known as version 4644. Intel claims that this latest driver achieves an average performance uplift of between 12 and 19% in DirectX 11 games (depending on the host CPU), which we'll discuss in a bit. This update also brings along a new metric that Intel has devised to help gamers determine whether their performance issues in games are due to their GPU or the rest of their system.

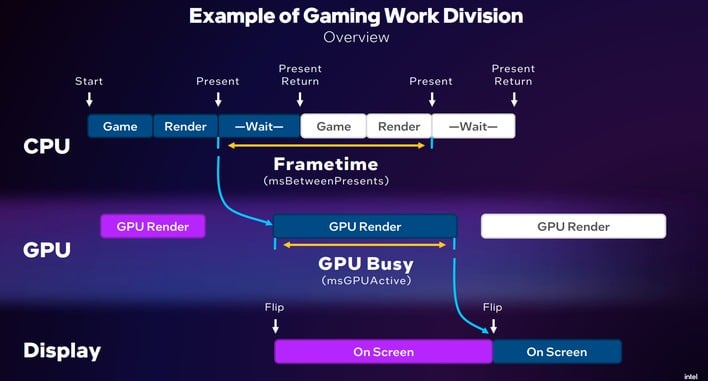

Generally speaking, you want video games to be bottlenecked by the graphics processor. The reason for this is that it is the final step in the render process, and it has the most control over when frames are presented to the display. We call this condition being "GPU-limited". When performance is being held up by some other part of your system, we usually call it being "CPU-limited" even though it may not necessarily have anything to do with the CPU itself; often enough, "CPU-limited" games are actually struggling with main memory latency, system I/O, thread contention, or other issues.

GPU-Busy And Intel's New PresentMon Beta Tool

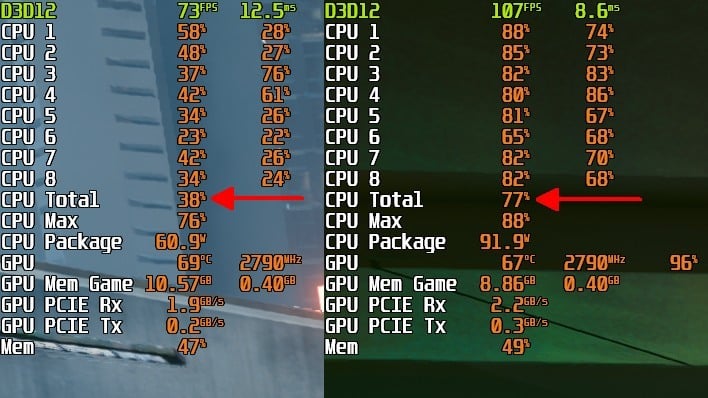

Traditionally, we detect CPU-limited games by looking at CPU and GPU usage. When the frame rate is low and GPU usage is also low, we can typically assume that the game is suffering under some other kind of limitation. However, this can be an inexact science. Intel wants to remove all uncertainty by introducing a new metric called "GPU Busy." It's measured in milliseconds, and the intention is to compare it directly against frametimes, or more specifically "time between presents", to see how much of the render time was spent on the GPU.

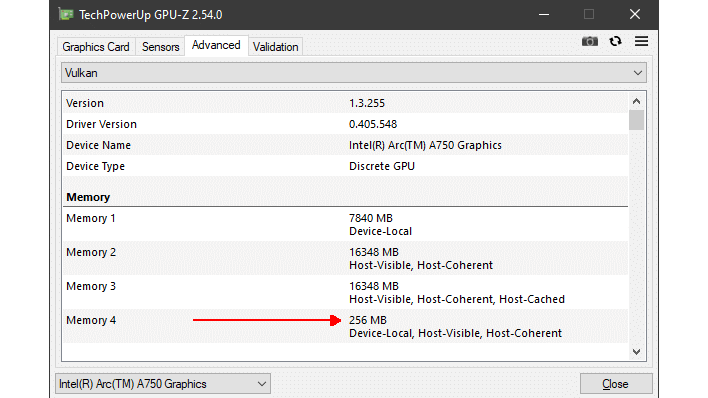

If only a small portion of a given frame was spent on the GPU Render part of the process, then it's very likely that your performance is being held up by something else. This can be down to a game's settings, and it will vary drastically between software and hardware configurations. Someone who slaps a modern graphics card into their old Core i7-4790K desktop is going to miss out on the overwhelming majority of the performance of their new graphics card (especially if it's an Arc, considering those GPUs really need resizable BAR support for best performance). Intel's own Tom "TAP" Petersen and Ryan Shrout discuss GPU Busy and additional Arc updates here...

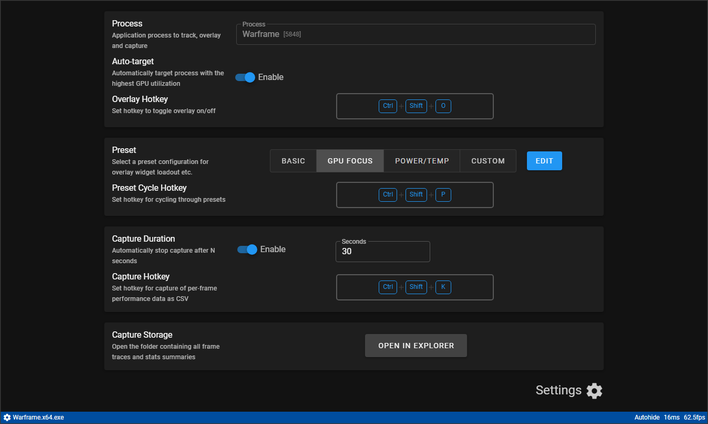

Intel devised this metric so that gamers can use it to optimize their settings. If you're getting bad or inconsistent performance in a game, you can use Intel's new customized PresentMon tool to profile your game's performance and see what percentage of each frame is spent on your GPU.
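As a rough illustration of the comparison described above, here is a minimal Python sketch that divides GPU Busy time by the time between presents for each frame. The `Frame` fields, the function names, and the 0.9 cutoff are our own assumptions for illustration; they are not PresentMon's actual column names or an Intel-recommended threshold.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Frame:
    # Hypothetical field names; a real capture may label these differently.
    ms_between_presents: float  # frametime in milliseconds
    ms_gpu_busy: float          # time the GPU spent working on this frame


def gpu_busy_share(frame: Frame) -> float:
    """Fraction of the frametime during which the GPU was busy (0.0 to 1.0)."""
    if frame.ms_between_presents <= 0:
        return 0.0
    return min(frame.ms_gpu_busy / frame.ms_between_presents, 1.0)


def classify(frames: list[Frame], threshold: float = 0.9) -> tuple[float, str]:
    """Average the per-frame share and label the capture.

    The threshold is an arbitrary illustrative cutoff, not an official value.
    """
    share = mean(gpu_busy_share(f) for f in frames)
    label = "GPU-limited" if share >= threshold else "likely limited elsewhere"
    return share, label


if __name__ == "__main__":
    capture = [Frame(16.7, 6.0), Frame(18.2, 5.5), Frame(15.9, 6.3)]
    share, label = classify(capture)
    print(f"GPU busy share: {share:.0%} ({label})")
```

A capture where the share sits near 100% suggests the GPU is the bottleneck; a much lower share points toward the CPU, memory, or another part of the system.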

Of course, Intel didn't do this simply out of the goodness of its heart. The company says that its new driver drastically improves the efficiency of CPU operations in games, such that frametimes, particularly in DirectX 11 and DirectX 9 titles, become both more consistent and overall lower. That means smoother performance in general, even if the actual 'average FPS' hasn't gone up that much.

Intel's idea of measuring GPU time versus CPU time isn't exactly a new one. You've surely run game benchmarks yourself that outline "GPU FPS" versus "CPU FPS," as this kind of thing has become more common. Titles like Shadow of the Tomb Raider, Forza Horizon 5, and Gears 5 (just to name a few) all have detailed breakdowns of the CPU and GPU performance impact of your settings as part of their benchmark tools.

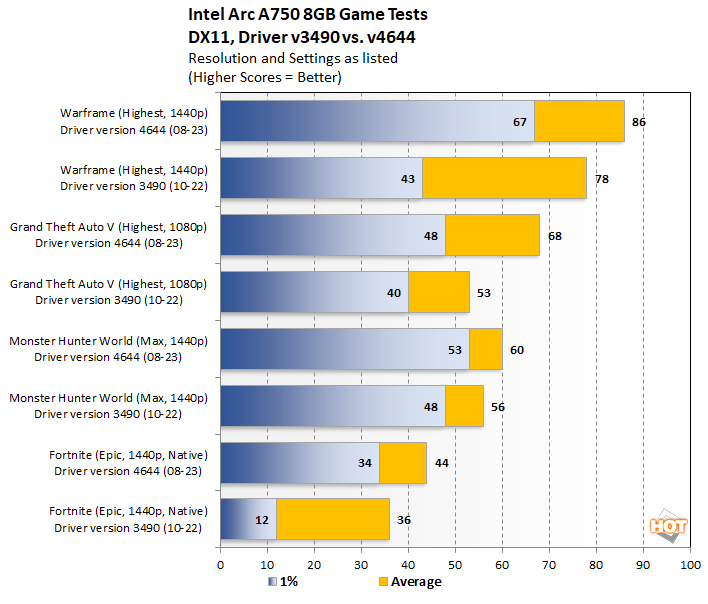

Real Game Testing: Verifying Intel's Numbers

Intel has also made some pretty big claims about performance gains across the board, but it's important to keep in mind that the company is comparing its progress against the original public Arc driver, version 3490, released way back in October. It's a fairer comparison than you might think, because most reviews of the Arc GPUs were done around the release of the cards, and so they used that driver, or a pre-release driver that was slightly older.

We've tested a handful of games on an Arc A750 graphics card using first the original v3490 driver from last year, and then afterward, the just-released v4644 driver. Unfortunately, there is no way for us to go back to the old driver and re-test if we needed to, because the v4644 driver includes a mandatory firmware update. This firmware update does seem to have corrected a persistent issue where Arc GPUs would fail to initialize correctly on a warm boot, so that's good.

Given that Intel's focus for this driver was on DirectX 11 performance, we've emphasized those results in our testing, but we've also tested a half-dozen other games using other APIs to see if there were any notable changes. First, let's take a look at the DirectX 11 results:

Results vary, but these are some pretty decent upgrades, especially in terms of consistency. Fortnite goes from nearly-unplayable to reasonably smooth, and Warframe also saw a tremendous improvement in frametime stability. GTA V, likewise, sees a nice bump in performance; don't read too much into the larger gap between 1% and Average because we were doing a custom test in GTA Online and the six results we collected (three with the old driver, three with the new) varied pretty widely, as is the usual nature of testing with online games.
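For readers unfamiliar with the "1%" and "Average" figures, the Python sketch below shows one common way to derive them from a list of frametimes. This is an assumption about the general technique, not a description of our exact capture pipeline: here the 1% low is the average frame rate over the slowest 1% of frames, though some tools use the 99th-percentile frametime instead. The function names are hypothetical.

```python
def average_fps(frametimes_ms: list[float]) -> float:
    """Average FPS: total frames divided by total elapsed time."""
    return 1000.0 * len(frametimes_ms) / sum(frametimes_ms)


def one_percent_low_fps(frametimes_ms: list[float]) -> float:
    """FPS over the slowest 1% of frames (at least one frame)."""
    worst_first = sorted(frametimes_ms, reverse=True)
    count = max(1, len(worst_first) // 100)
    slowest = worst_first[:count]
    return 1000.0 * len(slowest) / sum(slowest)


if __name__ == "__main__":
    # Illustrative data: 99 smooth frames and one 50 ms stutter.
    frametimes = [10.0] * 99 + [50.0]
    print(f"Average: {average_fps(frametimes):.1f} FPS")
    print(f"1% low:  {one_percent_low_fps(frametimes):.1f} FPS")
```

A single long stutter barely moves the average but drags the 1% low down sharply, which is why the two numbers can tell very different stories.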

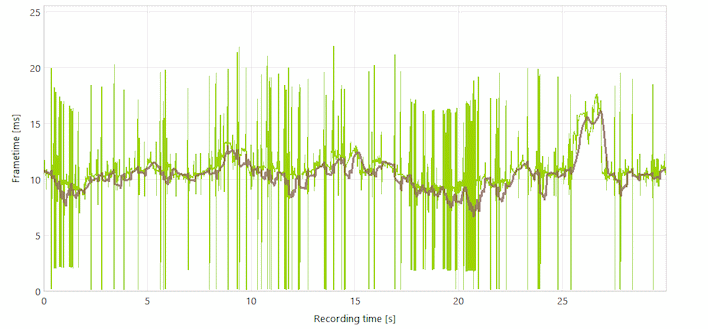

Warframe was one of the best results we got from the new driver. An uplift from 78 to 86 average FPS isn't bad in its own right for just a driver change (that's roughly a 10% gain), but the real news here is the improvement in framerate stability. Warframe is kind of a stuttery game to begin with, even on AMD and NVIDIA hardware, but with the old driver it was practically unplayable, with massive stutters up to 2 seconds in length. With the new driver, it's as smooth as it gets in 1440p, or even in 4K if you make use of the game's FSR 2.2 upscaling.

Notably, Warframe actually has a DirectX 12 renderer, marked as (Beta) in the launcher. This seems to largely be a D3D11on12 hack and historically has not offered improved performance on other GPUs. Well, it doesn't here, either. In our testing, DX12 in Warframe is both less stable (frametime-wise) and also slower than DX11 on every GPU, so don't bother with it, even for Arc.

Intel claims a 27% improvement in GTA Online performance. Our measured bump was actually a bit better at 28%, which ain't bad at all. We did see a smaller gain in 1% performance, but as noted above, there was considerable variance between our runs in GTA Online, and we wouldn't worry too much about this. The result is great, regardless, as GTA Online can still be a surprisingly heavy game in 2023.
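The uplift percentages quoted here are relative changes in average FPS. As a minimal sketch, assuming each driver's result is the mean of several runs, the calculation looks like this in Python. The run values below are made up for illustration and are not our measured data.

```python
from statistics import mean


def percent_uplift(old_fps: float, new_fps: float) -> float:
    """Relative change from old_fps to new_fps, in percent."""
    if old_fps <= 0:
        raise ValueError("old_fps must be positive")
    return (new_fps - old_fps) / old_fps * 100.0


def uplift_from_runs(old_runs: list[float], new_runs: list[float]) -> float:
    """Average each set of runs first, then compare the two means."""
    return percent_uplift(mean(old_runs), mean(new_runs))


if __name__ == "__main__":
    # Hypothetical average-FPS results, three runs per driver.
    old_driver = [48.0, 50.0, 52.0]
    new_driver = [62.0, 64.0, 66.0]
    print(f"Uplift: {uplift_from_runs(old_driver, new_driver):.1f}%")
```

Averaging runs before comparing helps smooth out the run-to-run variance that online games tend to show.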

We tested Monster Hunter World because it's still one of the more demanding DirectX 11 games, and the gains were pretty modest, but also surprisingly consistent across runs. MH World also features some of the most consistent frametimes in our testing. It runs well on the Arc A750 at 1440p, and with the new driver, the DirectX 12 mode offers very slightly improved performance over even these numbers. Just don't try it on the old driver; it gave us "blue screen of death" errors related to video scheduling twice.

On the other hand, DirectX 12 mode couldn't even be toggled in Fortnite using the older driver. Even in DirectX 11 mode, using the game's recommended settings—which set almost everything to "Epic", the highest value—the game was nearly unplayable.

Swapping over to DirectX 12 mode and enabling Fortnite's Unreal Engine 5 Lumen and Nanite features absolutely brutalized performance, with averages dropping to the low 20s and 1% numbers in the single digits. Suffice it to say that an Arc A750 isn't ready for Unreal Engine 5, at least not in 1440p.

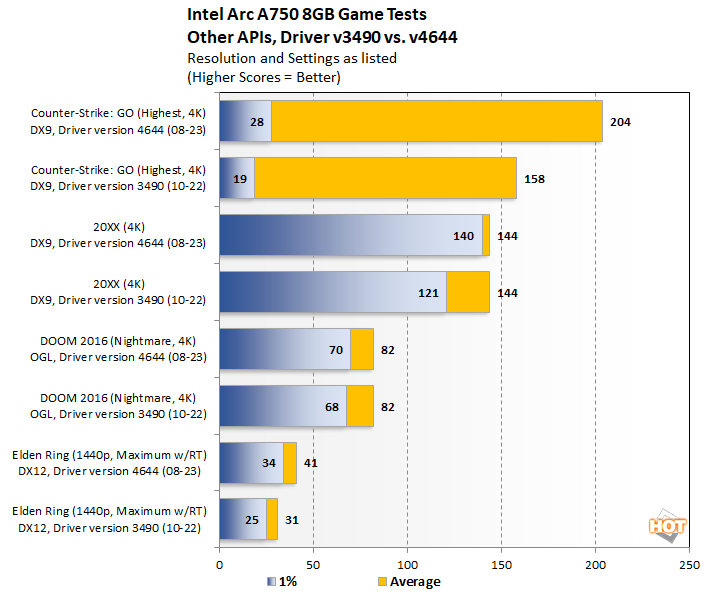

Outside of our DirectX 11 game tests, we also tested a couple of games each for DirectX 9, OpenGL, Vulkan, and DirectX 12.

Arc's performance in the most popular game on Steam was a big story around the launch of the GPUs. Even though the cards were putting out hundreds of frames per second, it was hundreds fewer than competing cards, so Intel put some game-specific effort into improving CS:GO. Did it help? Sure.

We're testing using a map made specifically for stress-testing performance in CS:GO, and even in 4K with the in-game settings completely maxed out, the A750 managed 158 average FPS. Keep in mind that the benchmark includes a section where the player walks directly through multiple smoke grenades, and that's why the 1% lows are relatively poor. The new driver nearly doubles the 1% lows and improves the average by 29%, which is quite good.

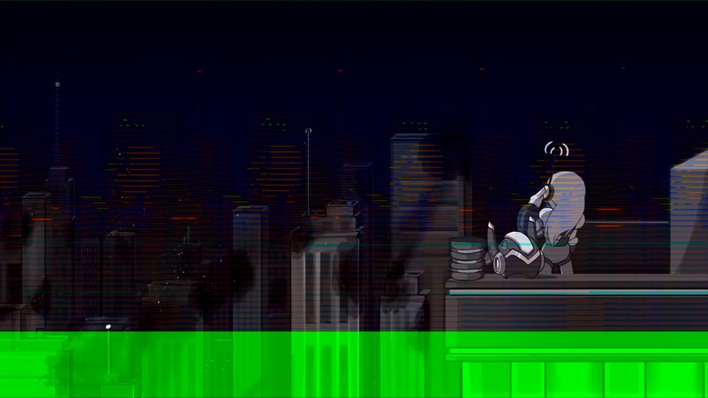

Most players would probably never notice the performance difference between the old driver and the new, but they certainly would notice the image quality difference. The old driver had significant visual bugs in 20XX, and it also caused long load times for whatever reason. 20XX is a game that loads in a couple of seconds on NVIDIA and AMD hardware, and on the new driver, that's largely resolved for Arc, too, along with the aforementioned visual bugs getting fixed.

You might think that on an Arc GPU, the better option would be to play in Vulkan mode, and if you only look at average FPS, you'd be right. In Vulkan mode, on both the old and new drivers, Doom (2016) offers a higher average FPS than in OpenGL mode. However, it also offers drastically worse 1% lows. This may be due to the critical Resizable BAR feature apparently not working in Vulkan on Arc when run on AMD platforms; more on this below.

Much has been made of Arc's capable ray-tracing performance. We wanted to test that too, so we loaded up one of our favorite titles of the last decade, Elden Ring, and benchmarked it in the game's starting area of Limgrave, near the Church of Elleh at daybreak. The performance gains from the new driver are clear to see, and since this game was designed with 30 FPS gameplay in mind, we'd say it runs just fine.

You can optionally turn the resolution down to 1080p to run up against the 60 FPS cap, or you can disable ray-tracing altogether to achieve the same in 1440p, but we don't really recommend it; the RTAO implementation has a big effect on immersion. With that said, with the RT effects turned off, the Arc A750 can even run Elden Ring smoothly in 4K resolution with maximum settings, and that ain't bad.

It's not on our chart above because it's not really playable, but we also tested Cyberpunk 2077's path-traced "Overdrive" mode. Spoilers: it doesn't work. Well, it does work, actually, just fine, even on the old driver. However, whether old or new driver, the performance caps out in the mid-teens even if you reduce the resolution as far as 960×540. Cyberpunk 2077 runs just fine on Arc without path-tracing, but hopefully Intel can take a look at this title and get the real-time ray-tracing working better than it does.

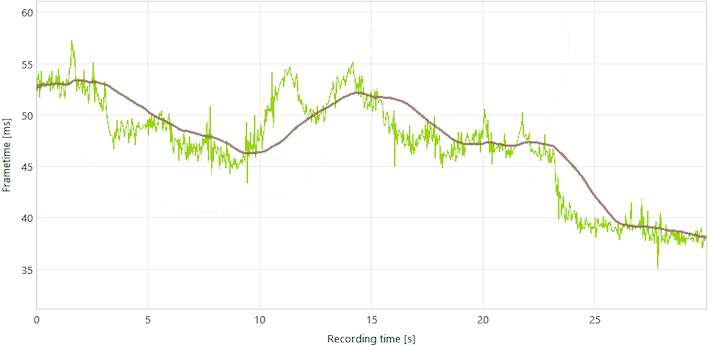

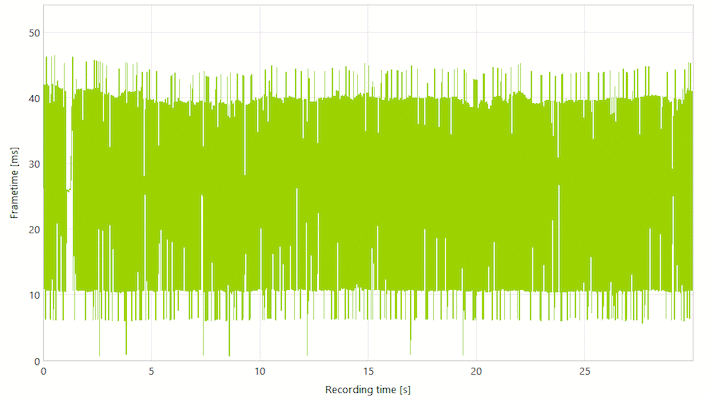

We also tested Quake II RTX. Other outlets have reported good results with this modified title on Intel's GPUs, but our experience wasn't great. Check out this frame time graph:

After some investigation, it looks like resizable BAR is not enabled specifically for Vulkan on our test system, which is based on a Ryzen 7 5800X3D CPU in an ASRock X570 Taichi motherboard. We researched the issue, and found a thread where some folks described the same behavior not only on AMD systems, but also on Intel machines where the CPU's integrated graphics were disabled. It's a curious bug, and makes Arc considerably less attractive for folks with AMD systems. Hopefully Intel can get this one worked out.

Meanwhile, ZDoom runs rather poorly in OpenGL, with the infamous "Transcendence" benchmark struggling to reach just 26 FPS. Swapping over to Vulkan greatly improves that to around 38 FPS, but unfortunately, the new driver made no real difference in these numbers. 38 FPS is actually a good result for an entry-level GPU in this benchmark, so we'll give it a pass.

Intel's Latest Arc Updates: The Final Word

With Intel charging just $250 US for an Arc A750, it becomes hard to recommend anything else at that price point. It's true that you can spend another hundred dollars to get a much faster GPU in the Radeon RX 6700 XT, but not everyone has an extra hundred dollars to spend—and that card will be both larger and much thirstier than the A750.

In our testing, we really haven't run into any showstopper issues with games (even obscure ones, including emulators) on the 4644 driver, so we'd say it's probably safe to recommend Arc to most gamers at this point, which isn't something that could necessarily be said when the GPUs launched late last year. Here's to Intel's driver team for making massive strides in recent months, and we're looking ahead with hopeful eyes at the company's second generation of graphics processors that loom on the horizon.