How Intel Plans To Enable Path Tracing On Integrated Graphics

Two of the papers Intel will present focus on making path tracing more efficient in scenes with dynamic objects and complex geometry, two things that are extremely common in video games. One of them "presents a novel and efficient method to compute the reflection of a GGX microfacet surface." Intel describes GGX as the "de facto standard material model" for games.
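For readers unfamiliar with GGX, here is a minimal sketch of its textbook normal distribution function (also known as Trowbridge-Reitz), which describes how the microscopic facets of a rough surface are oriented. This is the standard published formula, not Intel's new method; the function names and the `alpha` roughness parameter are our own choices for illustration.

```python
import math

def ggx_ndf(cos_theta_h: float, alpha: float) -> float:
    """Textbook GGX distribution D(h) for the angle between the
    surface normal and the microfacet normal h."""
    if cos_theta_h <= 0.0:
        return 0.0  # facets facing away from the surface contribute nothing
    a2 = alpha * alpha
    denom = cos_theta_h * cos_theta_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * denom * denom)

def projected_area(alpha: float, steps: int = 20000) -> float:
    """Midpoint-rule estimate of the hemisphere integral of D(h) * cos(theta).
    A valid microfacet distribution should give 1."""
    d_theta = (math.pi / 2.0) / steps
    total = 0.0
    for i in range(steps):
        theta = (i + 0.5) * d_theta
        c = math.cos(theta)
        total += ggx_ndf(c, alpha) * c * math.sin(theta)
    return 2.0 * math.pi * total * d_theta

if __name__ == "__main__":
    for alpha in (0.1, 0.5, 1.0):  # hypothetical roughness values
        print(f"alpha={alpha}: projected area ~ {projected_area(alpha):.4f}")
```

Lower `alpha` values concentrate the facets around the surface normal, giving a tighter, more mirror-like highlight; higher values spread them out for a rougher look.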

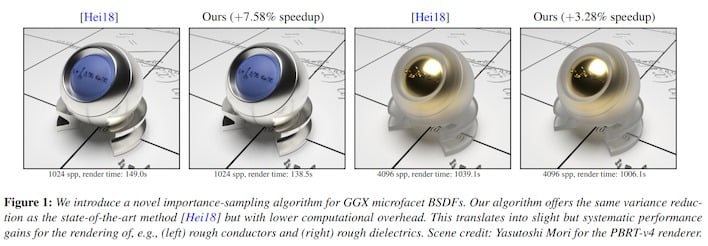

To cut to the chase, this method relies on certain safe assumptions when calculating how light scatters off reflective surfaces to drastically improve the performance of "BSDF sampling." How much? Up to 2.55x faster than the previous state of the art. Don't get too excited, though; BSDF sampling is typically only 2-4% of the render time of a path-traced image, so cutting it down to roughly 0.8-1.6% at best is ultimately a small gain in render performance. It's still a nice improvement, though, and scenes with lots of reflective surfaces will gain more.
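To put numbers on that caveat, here is a quick back-of-the-envelope sketch using Amdahl's law. It assumes the 2-4% share and the best-case 2.55x figure quoted above apply directly, which real scenes won't match exactly; the function name is ours.

```python
def overall_speedup(fraction: float, stage_speedup: float) -> float:
    """Amdahl's law: whole-frame speedup when only one stage,
    taking `fraction` of the render time, gets `stage_speedup` times faster."""
    return 1.0 / ((1.0 - fraction) + fraction / stage_speedup)

STAGE_SPEEDUP = 2.55  # best-case BSDF sampling speedup quoted in the article

if __name__ == "__main__":
    for fraction in (0.02, 0.04):  # BSDF sampling share of total render time
        gain = overall_speedup(fraction, STAGE_SPEEDUP)
        print(f"BSDF share {fraction:.0%}: whole frame renders {gain:.4f}x as fast")
```

Under those assumptions the whole frame renders only about 1.2% to 2.5% faster, which is why the headline figure overstates what you'd see on screen.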

Speaking of reflective surfaces, another one of Intel's papers describes a new method for rendering "glinty" surfaces: things with a sparkly appearance such as certain car paints, snow, or anything with "glints" of reflectivity. These kinds of materials rarely appear in games because they're extremely difficult to render, but Intel's new method combines a novel counting method with "anisotropic parameterization of the texture space" to achieve a speedup between 1.5x and 5x when rendering them.

Two other papers for the Eurographics Symposium on Rendering (EGSR) cover accurately simulating the reflectance and transmittance of light through translucent materials, as well as a new method of sampling photon trajectories more efficiently in "difficult illumination scenarios." The latter paper compares its method directly against NVIDIA's ReSTIR method, and claims that it offers superior quality. There's a hack of Quakespasm that implements this method for ray tracing, but we weren't able to get it working by press time.

Intel says that it will give a talk during SIGGRAPH's Advances in Real-time Rendering course, titled "Path-Tracing A Trillion Triangles," that demonstrates the benefits of algorithmic advancements in path tracing. The company also says that real-time path tracing "can be practical even on mid-range and integrated GPUs in the future." Excitingly, Intel promises that all of its research will be made open-source "in the spirit of Intel's open ecosystem software mindset."