NVIDIA Titan RTX Review: A Pro Viz, Compute, And Gaming Beast

We also spent a little time overclocking the NVIDIA Titan RTX to see how much additional frequency headroom it had available. Before we get to our results, though, we should quickly recap Turing's new GPU Boost algorithm and cover some new overclocking-related features.

Overclocking NVIDIA Turing GPUs

Turing-based GeForce cards feature GPU Boost 4.0. As on previous-gen GeForces, GPU Boost scales frequencies and voltages upwards, power and temperature permitting, based on the GPU's workload at the time. Should a temperature or power limit be reached, however, GPU Boost 4.0 will only drop down to the previous boost frequency/voltage step, not all the way to the base frequency, in an attempt to bring power and temperatures down gradually. Whereas GPU Boost 3.0 could drop sharply to the base frequency when constrained, GPU Boost 4.0 is more granular and should allow for higher average frequencies over time. The Titan RTX isn't branded as a GeForce, but it behaves the same way.
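To make the difference between the two fallback behaviors concrete, here is a toy model, not NVIDIA's actual algorithm. It assumes a hypothetical ladder of boost bins and a simplified rule of stepping up one bin per interval when unconstrained; when a limit is hit, the 3.0-style model falls back to the base clock, while the 4.0-style model drops only one bin.

```python
"""Toy model of GPU Boost 3.0-style vs 4.0-style fallback on a limit hit.
The clock bins are hypothetical illustration values, not the Titan RTX's
real voltage/frequency table."""

BASE_MHZ = 1350
# Hypothetical boost bins, lowest (base clock) to highest.
BOOST_BINS_MHZ = [1350, 1500, 1650, 1770, 1890, 1950]


def next_clock(current_mhz: int, limited: bool, boost4: bool) -> int:
    """Return the clock for the next interval under the toy rules."""
    idx = BOOST_BINS_MHZ.index(current_mhz)
    if not limited:
        # Unconstrained: climb one bin, capped at the top bin.
        return BOOST_BINS_MHZ[min(idx + 1, len(BOOST_BINS_MHZ) - 1)]
    if boost4:
        # 4.0-style: back off only to the previous bin.
        return BOOST_BINS_MHZ[max(idx - 1, 0)]
    # 3.0-style: fall all the way back to the base clock.
    return BASE_MHZ


def simulate(limit_events: list[bool], boost4: bool) -> list[int]:
    """Start at the top bin and apply one limit/no-limit event per interval."""
    clock = BOOST_BINS_MHZ[-1]
    trace = []
    for limited in limit_events:
        clock = next_clock(clock, limited, boost4)
        trace.append(clock)
    return trace


if __name__ == "__main__":
    # One brief limit hit in the middle of an otherwise steady load.
    events = [False, False, True, False, False]
    v3 = simulate(events, boost4=False)
    v4 = simulate(events, boost4=True)
    print("3.0-style:", v3, "avg", sum(v3) / len(v3))  # avg 1680.0
    print("4.0-style:", v4, "avg", sum(v4) / len(v4))  # avg 1938.0
```

Even in this simplified model, a single limit event costs the 3.0-style trace several intervals of recovery, which is why the more granular behavior should yield higher average clocks.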

As we've mentioned in our previous coverage of the Turing architecture, there are beefier VRMs on Turing-based cards versus their predecessors, which should help with extreme overclocking, though most of the cards are still being power limited to prevent damage and ensure their longevity.

With the launch of Turing, NVIDIA also tried to make the overclocking process easier by introducing a new Scanner tool and API, which is available on the Titan RTX. The NVIDIA Scanner is meant to be a one-click overclocking tool with an intelligent testing algorithm and a specialized workload, designed to help users find the maximum stable overclock on their particular card without resorting to trial and error. The Scanner tries progressively higher frequencies at each voltage step and tests for stability with its workload along the way. The entire process should take around 20 minutes and, when it completes successfully, the Scanner will have found the maximum stable overclock across the card's entire voltage/frequency curve.
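The per-voltage search described above can be sketched roughly as follows. This is only an illustration under stated assumptions: the voltage points, starting clocks, step size, and the `is_stable()` callback are all hypothetical stand-ins, and NVIDIA's actual Scanner workload and search logic are not documented at this level of detail.

```python
"""Hypothetical sketch of a Scanner-style V/F curve search. is_stable() is a
stand-in for running a stress workload at a given voltage/frequency point."""

from typing import Callable, Dict, List

# (voltage_mv, freq_mhz) -> True if the stability workload passed
StabilityTest = Callable[[int, int], bool]


def scan_curve(
    voltages_mv: List[int],
    start_mhz: Dict[int, int],
    is_stable: StabilityTest,
    step_mhz: int = 15,
    max_steps: int = 50,
) -> Dict[int, int]:
    """Return the highest passing frequency at each voltage point.

    Assumes the starting (stock) frequency at each point is already stable.
    """
    curve: Dict[int, int] = {}
    for v in voltages_mv:
        best = start_mhz[v]
        for _ in range(max_steps):
            candidate = best + step_mhz
            if not is_stable(v, candidate):
                break  # first failure: keep the last passing frequency
            best = candidate
        curve[v] = best
    return curve


if __name__ == "__main__":
    # Fake card: stable up to (2 * voltage_mv + 100) MHz at each point.
    def fake_test(v: int, f: int) -> bool:
        return f <= v * 2 + 100

    volts = [800, 900, 1000]
    stock = {800: 1500, 900: 1650, 1000: 1800}
    print(scan_curve(volts, stock, fake_test))
    # {800: 1695, 900: 1890, 1000: 2100}
```

Searching each voltage point independently is what lets such a tool produce a full curve rather than a single global offset, at the cost of many stability runs, which is consistent with the roughly 20-minute runtime.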

As we've mentioned in a few articles, we have had a 0% success rate with the Scanner tool across multiple test beds (and Windows installs, driver revisions, and Precision X1 revisions), so we couldn't properly test the auto-scan feature. It simply doesn't work for us; hopefully you all have better luck out in the wild.

In lieu of using the NVIDIA Scanner, we kept things simple, and used the frequency offset and temperature target sliders to manually overclock the Titan RTX. First we cranked up the temperature target, then we bumped up the GPU and memory clocks until the test system was no longer stable or showed on-screen artifacts.
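The manual approach boils down to a simple incremental search: raise one offset at a time until a run fails, then keep the last good value. In this minimal sketch, `run_test()` is a hypothetical stand-in for running a benchmark and watching for crashes or on-screen artifacts, and the step sizes and caps are illustrative assumptions, not the values we used.

```python
"""Hypothetical sketch of manual offset tuning: core offset first, then
memory offset, each raised until a test run fails. Assumes the temperature
target has already been raised."""

from typing import Callable, Tuple

# (core_offset_mhz, mem_offset_mhz) -> True if the run was clean
RunTest = Callable[[int, int], bool]


def find_offsets(
    run_test: RunTest,
    core_step: int = 25,
    mem_step: int = 100,
    max_core: int = 300,
    max_mem: int = 1500,
) -> Tuple[int, int]:
    # Raise the core offset alone until the next step fails or hits the cap.
    core = 0
    while core + core_step <= max_core and run_test(core + core_step, 0):
        core += core_step
    # With the core offset fixed, raise the memory offset the same way.
    mem = 0
    while mem + mem_step <= max_mem and run_test(core, mem + mem_step):
        mem += mem_step
    return core, mem


if __name__ == "__main__":
    # Fake card: clean runs up to +160 MHz core and +800 MHz memory.
    print(find_offsets(lambda c, m: c <= 160 and m <= 800))  # (150, 800)
```

Tuning core and memory separately keeps it clear which offset caused a failure, at the cost of not exploring combinations of the two.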

In the end, our card had no trouble breaking the 2GHz barrier, but at that point we were bumping into the power limit. Still, overclocking delivered a decent performance boost, which allowed the Titan RTX to extend its lead over the GeForce RTX 2080 Ti, especially in the 4K Middle Earth benchmark, where the Titan beat the stock 2080 Ti by over 18%.


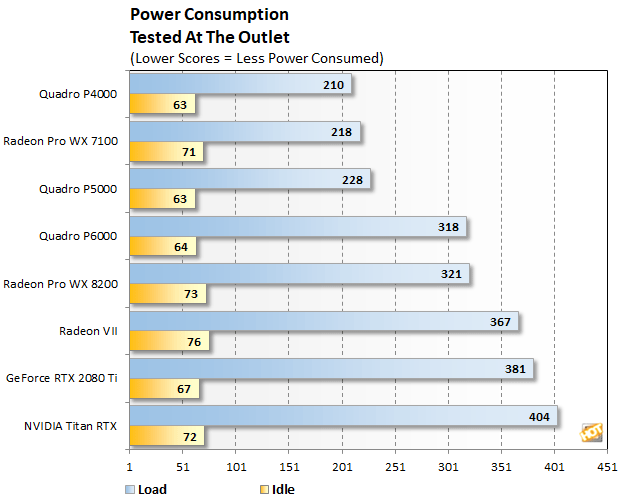

Before bringing this article to a close, we'd like to cover a couple of final data points -- namely, power consumption and noise. Throughout all of our benchmarking and testing, we monitored acoustics and tracked how much power our test system was consuming using a power meter. Our goal was to give you an idea of how much power each graphics configuration used while idling and also while under a heavy workload. Please keep in mind that we were testing total system power consumption at the outlet here, not the power being drawn by the graphics cards alone.

Despite the fact that the Titan RTX had the highest power consumption of the bunch, it is a relatively cool and quiet card. Under load, its fans do spin up to audible levels, but we would not consider it loud by any means. And as you can see in the screenshots above, the GPU temperature peaked below 70°C and never approached the default temperature target.