NVIDIA Jetson AGX Thor Tested: Blackwell Brings Physical AI to Life

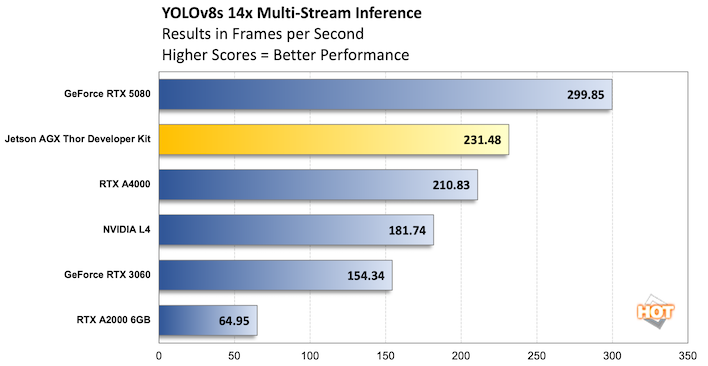

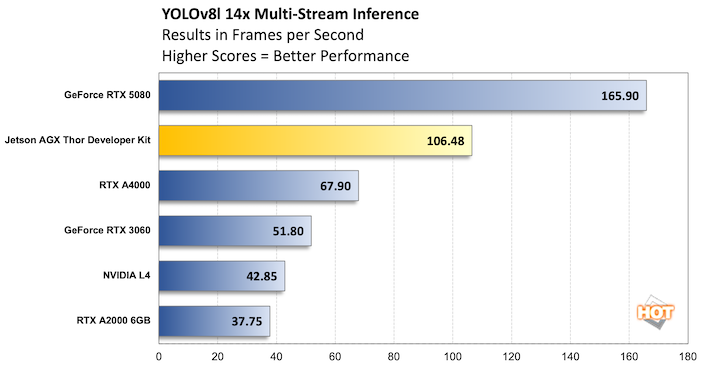

Demos are fun and interesting, but so is writing your own software. Back in June we talked about our own work in computer vision research and performance testing, and it was time to take some of that work and apply it to the Jetson AGX Thor Developer Kit. At the time, we had stuck to ONNX Runtime because the goal was to start with a proof of concept and evaluate performance and the time it takes to reach a minimum viable product (MVP). For the most part, that meant ONNX and TensorFlow Lite, since both are cross-platform frameworks that plenty of vendors, including NVIDIA, support through C++ libraries and Python/C++ APIs. First, let's look at the results; then we'll talk about the application.

We built a basic Python application that takes multiple video streams and runs computer vision on every frame of every stream simultaneously, in real time or as close to real time as you can get with so much video coming through. There's a lot of processing going on, because no AI task is just the model. We decode each 1080p frame and resize it for the model (in this case to 640x640, with letterboxing to preserve the aspect ratio). The app then runs inference on batches of frames (32 at a time, to keep the GPU as saturated as possible) and reads back the detected objects. Those objects are then drawn and labeled on the original video frame.
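To make the pre- and post-processing steps concrete, here's a minimal NumPy sketch of letterboxing a 1080p frame to 640x640, mapping a detection box back onto the original frame, and grouping frames into batches of 32. It's an illustration, not the application's actual code: the function names are hypothetical, the gray padding value of 114 is an assumption borrowed from common YOLO tooling, and the nearest-neighbor resize stands in for the interpolating (often GPU-accelerated) resize a real pipeline would use.

```python
import numpy as np

PAD_VALUE = 114  # gray padding; a common YOLO convention, assumed here


def letterbox(frame: np.ndarray, size: int = 640):
    """Fit an H x W (x C) frame into a size x size canvas without distortion.

    Returns the padded image plus the scale factor and (pad_x, pad_y) offsets
    needed to map detections back onto the original frame.
    """
    h, w = frame.shape[:2]
    scale = min(size / w, size / h)
    new_w, new_h = round(w * scale), round(h * scale)
    # Nearest-neighbor resize via index arrays (illustrative only).
    ys = np.clip((np.arange(new_h) / scale).astype(int), 0, h - 1)
    xs = np.clip((np.arange(new_w) / scale).astype(int), 0, w - 1)
    resized = frame[ys[:, None], xs[None, :]]
    canvas = np.full((size, size) + frame.shape[2:], PAD_VALUE, dtype=frame.dtype)
    pad_x, pad_y = (size - new_w) // 2, (size - new_h) // 2
    canvas[pad_y:pad_y + new_h, pad_x:pad_x + new_w] = resized
    return canvas, scale, (pad_x, pad_y)


def unletterbox_box(box, scale, pad):
    """Map an (x1, y1, x2, y2) box from model-input pixels to original-frame pixels."""
    pad_x, pad_y = pad
    x1, y1, x2, y2 = box
    return ((x1 - pad_x) / scale, (y1 - pad_y) / scale,
            (x2 - pad_x) / scale, (y2 - pad_y) / scale)


def batched(frames, batch_size: int = 32):
    """Yield stacked arrays of up to batch_size frames (the last may be short)."""
    batch = []
    for frame in frames:
        batch.append(frame)
        if len(batch) == batch_size:
            yield np.stack(batch)
            batch = []
    if batch:
        yield np.stack(batch)
```

For a 1920x1080 frame, the scale factor works out to 1/3, so the image becomes 640x360 with 140 pixels of padding above and below. That same scale and offset are what `unletterbox_box` undoes before boxes are drawn on the original frame.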

Some of this work happens on the CPU, but given the big performance differences between the GPUs and the fact that our CPU isn't fully saturated, it isn't much of a bottleneck. That remains the case here: the Jetson AGX Thor is faster than the RTX A4000 we tested previously, especially with YOLOv8l. It's still a long haul to reach the absolute juggernaut that is the GeForce RTX 5080, though. Measuring raw throughput doesn't make a ton of sense in a robotics context, where "as fast as possible" inference is usually reserved for the datacenter, but it's helpful to know the relative performance.

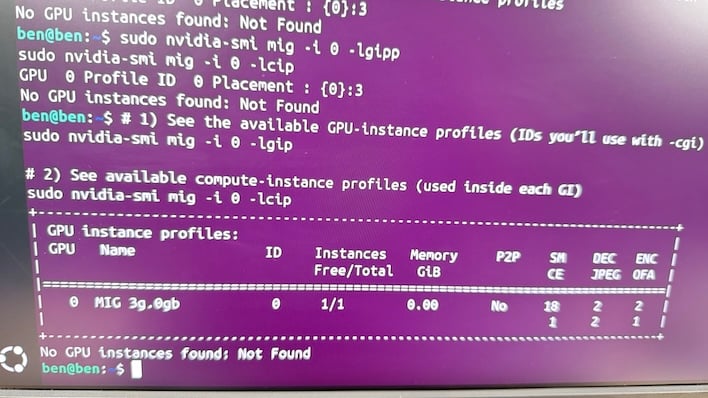

We'd experimented with running multiple model instances on a GPU at once, since there was VRAM to spare, but context switching made that slower than running a single instance. In theory, that changes with a product like the Jetson AGX Thor (as well as Blackwell GPUs like the GeForce RTX 5080), since the GPU can be partitioned and there's 128 GB of memory on tap, which leaves plenty to spare. A single YOLOv8l instance needs only around 5 GB to do its work, and one worker wasn't enough to keep our GPU fully saturated, because it has to spend time pulling frames of video out of our buffer before it can start processing them. Two partitions (and perhaps even three or four, given all that memory) should be faster than one, but we weren't able to find a way to force that in our short time with the Jetson AGX Thor.
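The single-worker bottleneck described above is a classic producer/consumer problem. The sketch below is a hypothetical illustration rather than the application's code: it uses Python's standard `queue` and `threading` modules so several workers pull batches from one bounded buffer, letting one worker fetch its next batch while another runs inference. `infer` stands in for whatever model call you use; threads only overlap that work if the call releases the GIL while it runs, as native inference runtimes commonly do, so treat that as an assumption to verify for your runtime.

```python
import queue
import threading
from typing import Callable, Iterable, List

_SENTINEL = object()  # unique marker telling a worker to shut down


def _worker(frame_queue: queue.Queue, results: list, lock: threading.Lock,
            infer: Callable[[list], list]) -> None:
    """Pull batches until the sentinel arrives, run inference, collect output."""
    while True:
        batch = frame_queue.get()
        if batch is _SENTINEL:
            break
        detections = infer(batch)  # hypothetical model call
        with lock:
            results.extend(detections)


def run_workers(batches: Iterable[list], infer: Callable[[list], list],
                num_workers: int = 2, max_queued: int = 8) -> List:
    """Feed batches through a bounded queue shared by num_workers threads."""
    frame_queue: queue.Queue = queue.Queue(maxsize=max_queued)
    results: list = []
    lock = threading.Lock()
    threads = [
        threading.Thread(target=_worker, args=(frame_queue, results, lock, infer))
        for _ in range(num_workers)
    ]
    for t in threads:
        t.start()
    for batch in batches:
        frame_queue.put(batch)  # blocks when the buffer is full (backpressure)
    for _ in threads:
        frame_queue.put(_SENTINEL)  # one stop signal per worker
    for t in threads:
        t.join()
    return results
```

Results arrive in whatever order the workers finish, so a real pipeline would tag each batch with its stream and frame index. Threads in one process also share a single GPU context; with MIG, the more natural layout is one worker process per partition.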

While Multi-Instance GPU (MIG) partitioning is supported on the T5000, we could only slice the GPU into a single partition, which isn't particularly helpful. It's early days for the Jetson AGX Thor Developer Kit, and NVIDIA says a software update that should help our situation is coming soon. But as the results above show, MIG or no MIG, the T5000's GPU delivered plenty of computer vision performance, even though it also had to annotate its detections and re-encode the video frames with those boxes drawn on them.
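If a future software update does expose more than one partition, one way to put them to work is to launch one worker process per MIG instance and pin each to its partition through the `CUDA_VISIBLE_DEVICES` environment variable, which accepts MIG device UUIDs. The sketch below assumes the Thor's `nvidia-smi -L` lists MIG devices in the same `(UUID: MIG-...)` form it uses on datacenter GPUs; that hasn't been verified on this kit, and the helper names are hypothetical.

```python
import os
import re
import subprocess
from typing import Dict, List

# Matches MIG device UUIDs in `nvidia-smi -L` output, e.g.
#   "  MIG 1g.5gb  Device  0: (UUID: MIG-...)"
# (format assumed from datacenter GPUs; not verified on Jetson AGX Thor)
_MIG_UUID_RE = re.compile(r"UUID:\s*(MIG-[^)\s]+)")


def parse_mig_uuids(nvidia_smi_output: str) -> List[str]:
    """Extract MIG device UUIDs from `nvidia-smi -L` text."""
    return _MIG_UUID_RE.findall(nvidia_smi_output)


def list_mig_uuids() -> List[str]:
    """Run `nvidia-smi -L` and return any MIG device UUIDs it reports."""
    out = subprocess.run(["nvidia-smi", "-L"], capture_output=True,
                         text=True, check=True).stdout
    return parse_mig_uuids(out)


def worker_envs(mig_uuids: List[str]) -> List[Dict[str, str]]:
    """Build one environment per MIG instance, each seeing only that instance."""
    envs = []
    for uuid in mig_uuids:
        env = dict(os.environ)
        env["CUDA_VISIBLE_DEVICES"] = uuid  # CUDA accepts MIG UUIDs here
        envs.append(env)
    return envs
```

Each returned environment can be passed to `subprocess.Popen(..., env=env)` to start a worker script (for example, a hypothetical `worker.py`) that only sees its own slice of the GPU. With a single partition, as on our kit, this simply yields one worker.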

We're not ready to admit defeat on this, and with software updates on the way, we're hopeful we'll get to really take GPU slicing for a spin on this thing. Our GeForce RTX 5080 desktop handled it without a problem, at least in part because we were using the Core i7-13700K's integrated GPU to drive the desktop, so the RTX 5080 had no display work to do. This isn't a review of MIG on an RTX 5080, though, so let's get back to the show.

NVIDIA AI Benchmarking

Along with our custom testing, which still showed the Jetson AGX Thor Developer Kit in a positive light, we could also run NVIDIA's own benchmarks. We would have loved to compare against other Blackwell hardware, but the container was provided for Arm64 only. The only other large Arm64 machine in the lab is the Mac Studio, and, well, it doesn't have an NVIDIA GPU inside. Our Jetson AGX Orin Developer Kit couldn't run the container either, which is a shame, because NVIDIA publishes numbers for the Orin. It may just be a software update we're missing, but we ran out of time to troubleshoot before Thor's launch; the fix may be as simple as re-imaging the machine with the latest available software. Either way, we figure the Orin's roughly RTX 3050-class GPU is no match for what amounts to about an RTX 5070's worth of GPU in the Thor, and NVIDIA's published benchmarks back that up.

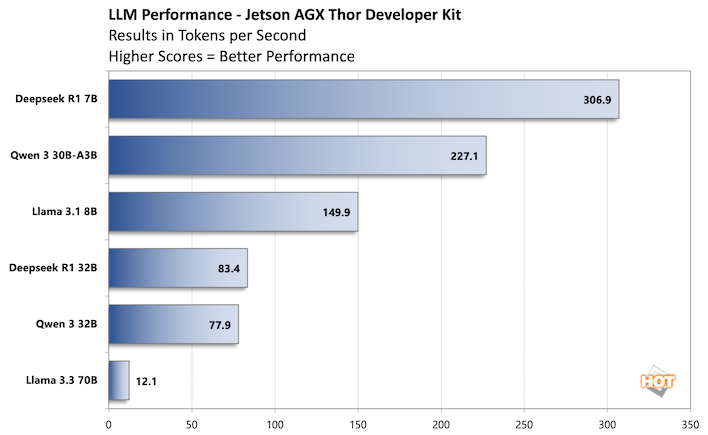

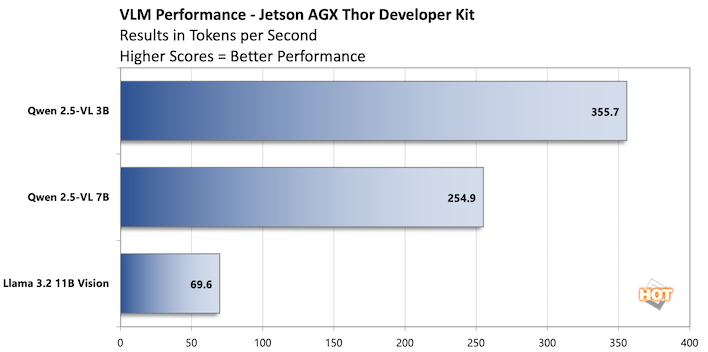

At any rate, here's a peek at what the Thor can do with some large language models.

It's interesting that NVIDIA included a benchmark for a 70-billion-parameter LLM, because, honestly, the Thor just isn't fast enough to make a model that large practical. This is one of the things we discussed when we reviewed the HP ZBook Ultra G1a: there's a difference between "having enough memory to run an LLM" and "running that LLM fast enough to be useful." Both of those machines definitely had the memory to do what we asked of them, but not the GPU resources to do it at the extreme end of the spectrum.
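The gap between fitting a model and running it quickly comes down to simple arithmetic. Weights occupy roughly parameters × bits per weight ÷ 8 bytes, and single-stream decoding has to read essentially all of them for every generated token, so memory bandwidth caps tokens per second. The sketch below is a rough back-of-the-envelope estimate: it ignores the KV cache, activations, batching, and tricks like speculative decoding, and the bandwidth is a value you supply, not a Thor specification.

```python
def weight_footprint_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of a model's weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def decode_ceiling_tok_s(params: float, bits_per_weight: float,
                         bandwidth_gb_s: float) -> float:
    """Rough upper bound on single-stream decode speed.

    Assumes every weight is read from memory once per generated token and
    ignores KV-cache traffic, compute limits, batching, and speculative decoding.
    """
    return bandwidth_gb_s / weight_footprint_gb(params, bits_per_weight)
```

By this estimate, a 70B model needs about 140 GB at 16-bit precision, more than the Thor's 128 GB, but about 35 GB at 4-bit, which fits easily. Fitting says nothing about speed, though: for a given bandwidth, the ceiling falls in direct proportion to the model's size in bytes, which is why a 32B model can decode more than twice as fast as a 70B one at the same precision.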

Even so, 12 tokens a second is better than we expected, and nearly 80 tokens/sec on Qwen 3 32B is great. Performance at that level is more than fast enough for both Qwen 3 32B and DeepSeek R1 32B. LLMs are one area where the Jetson excels, and it needs to, since humanoid robots are expected to combine language with visual inputs; that's what a vision language model (VLM) is for. We suspect that, over time, the Jetson AGX Thor's performance will improve with large models like Llama 3.3 70B. NVIDIA has a solid track record of boosting AI performance over the life cycle of its hardware, and there's no reason to think the same won't happen here as its software evolves. It may not improve to the point that 70B+ parameter models perform well enough to provide a good experience, but the numbers you see here are likely the worst-case scenario and will almost assuredly improve.

In that vein, the computer vision tests are far more interesting than the LLM results. Llama 3.2 11B Vision takes visual inputs and outputs language, and as we mentioned before, that's exactly the kind of thing an AI robot needs to do quickly. As the models get smaller, down to just 7B parameters, performance gets much, much faster. The 250+ tokens per second we saw with Qwen is what will fuel the very fast 10 Hz reasoning that NVIDIA says robots will need to be viable in the real world.
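A 10 Hz loop leaves 100 ms per reasoning step, so one rough way to read the decode rates above is how many tokens fit in each step. The sketch below is a simplification that treats the decode rate as constant; `prefill_s` is a hypothetical parameter for the time spent encoding the image and prompt before the first token, which also comes out of the same 100 ms budget.

```python
def tokens_per_cycle(decode_tok_s: float, control_rate_hz: float,
                     prefill_s: float = 0.0) -> float:
    """Tokens that can be generated within one control-loop period.

    The period is 1 / control_rate_hz seconds; any prefill time (image and
    prompt encoding) is subtracted first, and the result is floored at zero.
    """
    period_s = 1.0 / control_rate_hz
    return decode_tok_s * max(0.0, period_s - prefill_s)
```

At 250 tokens/s and 10 Hz, that's about 25 tokens per step before any prefill time is subtracted; at the 70B model's 12 tokens/s, it's barely more than one.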

NVIDIA Jetson AGX Thor Developer Kit Conclusions

One of NVIDIA's biggest weapons in the AI arms race is, of course, its hardware, but its software, platform integration, and ease of use are also worth highlighting. NVIDIA's software stack is laced with cute names that mask some incredibly capable tools. GR00T might make you think of a sentient tree from Guardians of the Galaxy, but it's also a powerful series of models designed for general-purpose robotics. Omniverse and Cosmos work together to generate synthetic training data. Isaac ROS is NVIDIA's collection of GPU-accelerated packages for ROS 2 (the Robot Operating System), used to gather sensor data and run the models that control machines in the real world. The software stack is diverse and nearly endless. Fortunately, the hardware that executes that software is also diverse and readily available. In the datacenter, it's the company's powerful DGX platforms and full-fat Blackwell GPUs, but at the edge, it's the Jetson T5000 module that sits at the heart of the Jetson AGX Thor Developer Kit. This compact, self-contained edge computing device has gobs of horsepower, and thanks to new tricks like Multi-Instance GPU (MIG) slicing, it can multitask with the best of them.

The price of the Jetson AGX Thor Developer Kit might be the biggest hurdle developers will run into. Its predecessor, the Jetson AGX Orin Developer Kit, was $1,999 back in 2023. Today, the 130W Jetson AGX Thor Developer Kit we showed you here will set you back a cool $3,499. You do get a lot of hardware and horsepower for the price, though. On top of the powerful Blackwell-fueled SoC, there's 128GB of very fast memory, 1TB of storage, and enough Arm64 CPU cores and I/O to do development directly on the box. It's extremely flexible and very fast, and if you're already invested in the NVIDIA AI ecosystem, we suspect a few thousand dollars isn't going to break the bank.

In the end you probably didn't need to read this conclusion to determine your need for a Jetson AGX Thor Developer Kit. If you want to run very large AI models in a friendly multi-tasking environment using NVIDIA's software stack, the Jetson AGX Thor Developer Kit is a great new tool for your toolchest. The good news is that it handles all of those tasks with style and aplomb. And the device will likely get even better over time as NVIDIA continues to refine and update its software stack with additional edge AI capabilities.