NVIDIA GeForce RTX 3080 Review: Ampere Is A Gaming Monster

We also spent a little time overclocking the new GeForce RTX 3080 to see how much additional performance it had left lurking under the hood. Before we get to our results, though, some background on overclocking Ampere is in order. Hint: it's not much different than Turing.

Overclocking Ampere And The RTX 3080

Ampere-based GeForce RTX 30-series cards feature GPU Boost, just like previous-gen GeForces. GPU Boost scales frequencies and voltages up and down based on the GPU's current workload, within predetermined power and thermal limits. Should a temperature or power limit be reached, GPU Boost drops to the previous boost frequency/voltage step, in an attempt to bring power and temperatures down gradually without causing any significant performance swings.
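
The step-down behavior can be illustrated with a toy model. This is not NVIDIA's GPU Boost algorithm, which isn't published in this form; the bin table, the one-step-per-interval rule, and the names `Limits` and `next_bin` are all hypothetical, chosen only for illustration. The 320W power limit is the RTX 3080's rated board power, and 83°C is its default temperature target.

```python
from dataclasses import dataclass

# Hypothetical boost bins as (MHz, volts). A real card's V/F curve is
# specific to each board and has many more points than this.
BINS = [(1710, 0.900), (1755, 0.925), (1800, 0.950), (1845, 0.975), (1890, 1.000)]


@dataclass
class Limits:
    power_w: float  # board power limit
    temp_c: float   # temperature target


def next_bin(index: int, power_w: float, temp_c: float,
             limits: Limits, busy: bool) -> int:
    """Pick the bin for the next control interval in this toy model.

    Drop one bin if power or temperature exceeds its limit, or if the GPU is
    lightly loaded; climb one bin if it is busy and has headroom. Moving a
    single step at a time keeps clock changes gradual."""
    over_limit = power_w > limits.power_w or temp_c > limits.temp_c
    if over_limit or not busy:
        return max(index - 1, 0)
    return min(index + 1, len(BINS) - 1)


limits = Limits(power_w=320.0, temp_c=83.0)
idx = len(BINS) - 1                                   # start at the top bin
idx = next_bin(idx, 335.0, 70.0, limits, busy=True)   # over power: step down one bin
print(BINS[idx])  # (1845, 0.975)
```

Real boost logic weighs many more inputs, but the core idea is the same: small, single-step moves rather than an abrupt drop to base clock.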

As we've mentioned in some of our previous coverage, NVIDIA has segmented the core and memory power rails and significantly tweaked and tuned interfaces on RTX 30-series cards to optimize signal integrity, which should help with overclocking. That said, like previous-gen Turing-based cards, the GeForce RTX 30-series is still power limited to prevent damage and ensure longevity.

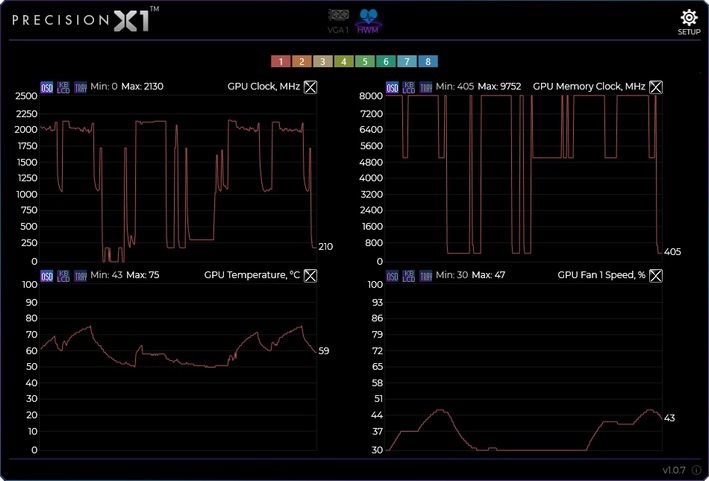

NVIDIA offers an automatic overclocking scanner tool for users who want to generate a tailored frequency and voltage curve for their specific card. But you can also overclock manually, which is what we did. Instead of using the NVIDIA Scanner, we kept things simple and used the frequency and voltage offsets, along with the power and temperature target sliders in EVGA's Precision X1 tool, to manually push the card well beyond stock. First, we cranked up the temperature and power targets and the voltage; then we bumped up the GPU and memory clock offsets until the test system was no longer stable, showed on-screen artifacts, or performance peaked due to hitting the power limit. With the GeForce RTX 3080, the power target can be increased by 9%, the temperature target can be raised from 83°C to 91°C, and the GPU voltage can be raised by up to 0.1V.
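
The manual procedure described above boils down to a simple search loop. The sketch below is hypothetical: `find_max_offset`, the `run_test` hook, the 15MHz step, and the +300MHz ceiling are illustrative assumptions, not features of Precision X1 or any NVIDIA tool. Only the slider ceilings in `RTX3080_LIMITS` come from our testing.

```python
from typing import Callable, Tuple

# Slider ceilings we saw for the RTX 3080 in Precision X1.
RTX3080_LIMITS = {"power_target_pct": 109, "temp_target_c": 91, "voltage_offset_v": 0.1}


def find_max_offset(run_test: Callable[[int], Tuple[bool, float]],
                    step_mhz: int = 15, max_offset_mhz: int = 300) -> int:
    """Raise a clock offset until the card fails or stops getting faster.

    run_test(offset) is a hypothetical hook that applies the offset, runs a
    benchmark, and returns (stable, score). "Stable" means no crash and no
    on-screen artifacts."""
    _, best_score = run_test(0)      # stock baseline
    best_offset = 0
    offset = step_mhz
    while offset <= max_offset_mhz:
        stable, score = run_test(offset)
        if not stable:
            break                    # back off to the last good offset
        if score <= best_score:
            break                    # gains have peaked, e.g. at the power limit
        best_offset, best_score = offset, score
        offset += step_mhz
    return best_offset


# A fake test: stable up to +150MHz, but scores stop rising past +120MHz.
def fake_test(offset: int) -> Tuple[bool, float]:
    return offset <= 150, 100 + min(offset, 120) * 0.1


print(find_max_offset(fake_test))  # 120
```

In practice each step means running a real benchmark and watching for artifacts by eye, and the same loop is then repeated for the memory offset.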

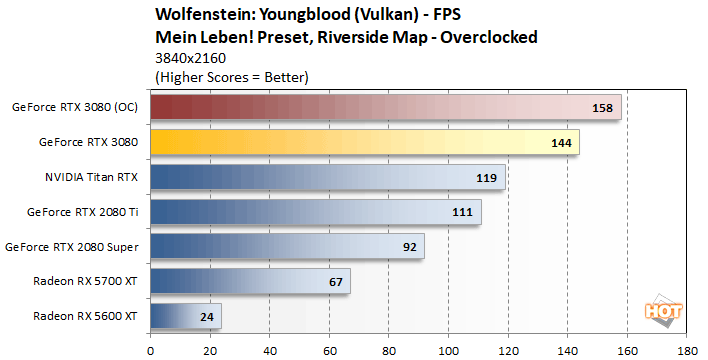

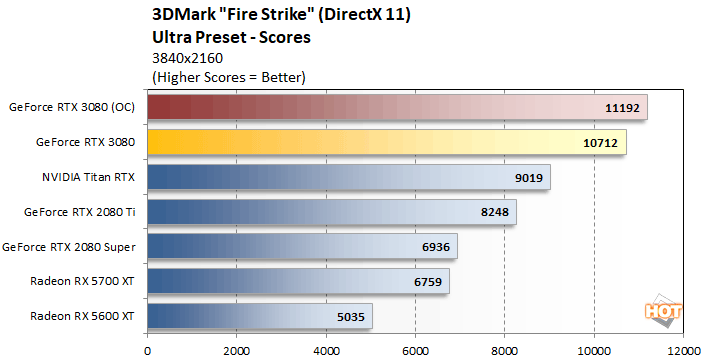

While we had our card overclocked, we re-ran a few benchmarks. We were able to take its GPU over 2.1GHz (2,130MHz) and its memory to an effective data rate of 19.5Gbps. Overclocked, the card posted clear performance improvements of about 10% in Wolfenstein and 3DMark, which pushed the GeForce RTX 3080 even further ahead of the other cards we tested.
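
For a sense of what the memory overclock means in raw throughput, the sketch below converts the effective data rate into theoretical peak bandwidth. It assumes the RTX 3080's published 320-bit memory interface and 19Gbps stock data rate, which come from NVIDIA's specifications rather than from our testing; the result is a theoretical ceiling, not a measured figure.

```python
# Assumption: the RTX 3080's published 320-bit bus and 19Gbps stock GDDR6X
# data rate; neither figure comes from our own measurements.
BUS_WIDTH_BITS = 320
STOCK_GBPS = 19.0
OVERCLOCKED_GBPS = 19.5  # the effective data rate we reached


def peak_bandwidth_gb_s(data_rate_gbps: float, bus_width_bits: int = BUS_WIDTH_BITS) -> float:
    """Theoretical peak bandwidth: per-pin data rate x bus width / 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8


stock = peak_bandwidth_gb_s(STOCK_GBPS)
oc = peak_bandwidth_gb_s(OVERCLOCKED_GBPS)
uplift_pct = (oc / stock - 1) * 100
print(f"{stock:.0f} -> {oc:.0f} GB/s (+{uplift_pct:.1f}%)")  # 760 -> 780 GB/s (+2.6%)
```

That is a modest bump on its own; the roughly 10% gains we measured reflect the core and memory overclocks together.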

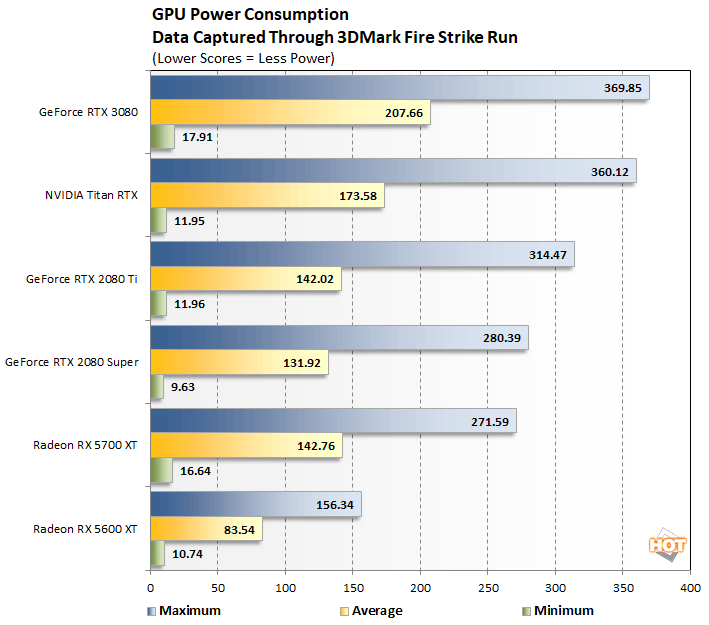

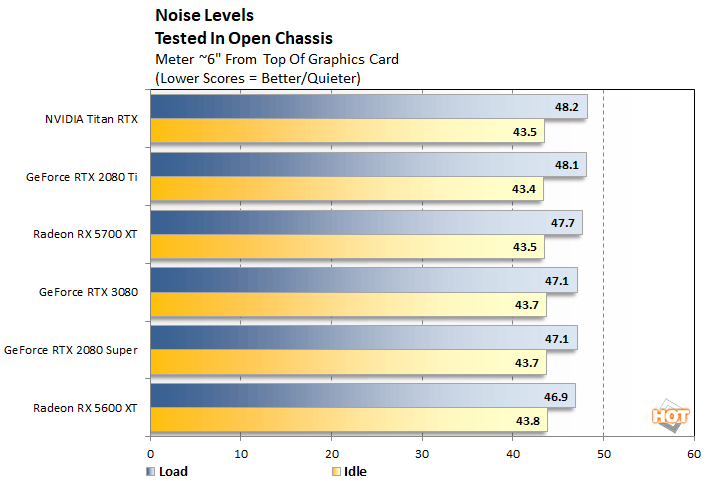

The GeForce RTX 3080's absolute power consumption may be higher than its predecessor, but even with its more demanding power requirements, heat and noise will be non-issues for most users.

In our real-world setup (we test GPUs inside a chassis), our test rig output the same sound pressure level with the GeForce RTX 3080 and the RTX 2080 Super installed. We measured the sound pressure about 6" away from the top of the GPU, with the side panel removed from the chassis. The test system has three additional fans: one 120mm exhaust fan, one 120mm fan on the CPU heatsink, and a 140mm fan in the PSU. Overall, the test system is relatively quiet, though its fans do drown out most GPUs, at least until the GPUs are placed under a sustained load for an extended period.

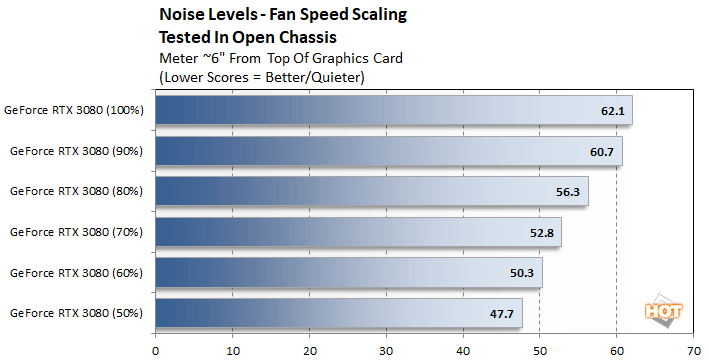

By default, the GeForce RTX 3080's cooling fans spin up to about 40% of their maximum speed in typical real-world use, which was barely audible over our test system's case and CPU fans. We did, however, manually crank up the fan speeds to see just how loud the card could theoretically get if temperatures got out of hand. At maximum speed (for both fans), our sound meter measured a peak just above 62dBA. Note, however, that even while heavily overclocked, our GPU temperature never exceeded 75°C and the fan speed maxed out at only 47%, which again kept noise levels below those of the system fans.

GeForce RTX 3080 Summary And Verdict

Summarizing the new GeForce RTX 3080's performance is as simple as could be -- it is the fastest GPU we have tested to date, across the board, period. Regardless of the game, application, or benchmark we used, the GeForce RTX 3080 put up the best scores of the bunch, often more than doubling the performance of AMD's current flagship Radeon RX 5700 XT. Despite its much lower introductory price, the GeForce RTX 3080 even skunked the Titan RTX and GeForce RTX 2080 Ti by relatively large margins. The bottom line is, NVIDIA's got an absolutely stellar-performing GPU on its hands, and the GeForce RTX 3080 isn't even the best Ampere has to offer -- the upcoming GeForce RTX 3090 is bigger and burlier across the board.

We have been hearing rumblings of Ampere's monster performance for months. Even before CES, a couple of board partners hinted that NVIDIA had lofty goals for Ampere and the company has delivered in spades. The GeForce RTX 3080 is a beast. We suspect peak power consumption is going to be a concern for some users, but in practice, for us at least, it is a non-issue. Thanks to the newly engineered cooling solution, the GeForce RTX 3080 runs cool and quiet in real-world conditions. Sure, your rig might put out a bit more heat, but we suspect most users aren't going to care with a GPU that performs as well as the RTX 3080 does.

Of course, we have yet to see what the GeForce RTX 3090 can do, and AMD has announced that its RDNA2-based Radeon RX 6000 series will be unveiled in a few weeks. Looking back through our numbers, "Big Navi" will have to offer more than 2X the performance of a Radeon RX 5700 XT to be in the same class as the GeForce RTX 3080. Could AMD do it? Sure, it's possible. But based on the company's somewhat conservative decisions of the last few generations, we don't think its targets are quite that aggressive. We'll know for sure soon enough, though.

Today, the spotlight shines on NVIDIA. The GeForce RTX 3080 is nothing short of impressive. At its expected $700-ish price point (depending on the model), there is nothing that can come even close to touching it. The new NVIDIA GeForce RTX 3080 is an easy Editor's Choice. If you're buying a new GPU in its price range, there is no other choice currently.
