NVIDIA GeForce RTX 3080 Review: Ampere Is A Gaming Monster

After the unveiling and subsequent follow-up briefings, we were obviously eager to get our hands on the GeForce RTX 3080. So, after a brief unboxing and tour when it arrived, we installed it in our test rig and dug right in. On the pages ahead, we’ve got a plethora of data to share regarding Ampere, the GeForce RTX 3080, and a bunch of competitors up and down NVIDIA’s and AMD’s stacks. And we use the term “competitors” loosely here – when you see the numbers, you’ll realize the GeForce RTX 3080 doesn’t have any competition, for now at least.

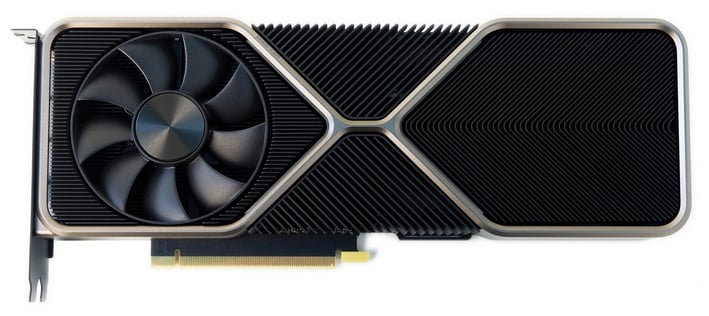

Here’s that quick tour of the GeForce RTX 3080 if you’re the sort that digs video over the written word. However, as attractive as the card might be, in our opinion the real beauty lies in the benchmarks. We’d better stop jawing and get down to business...

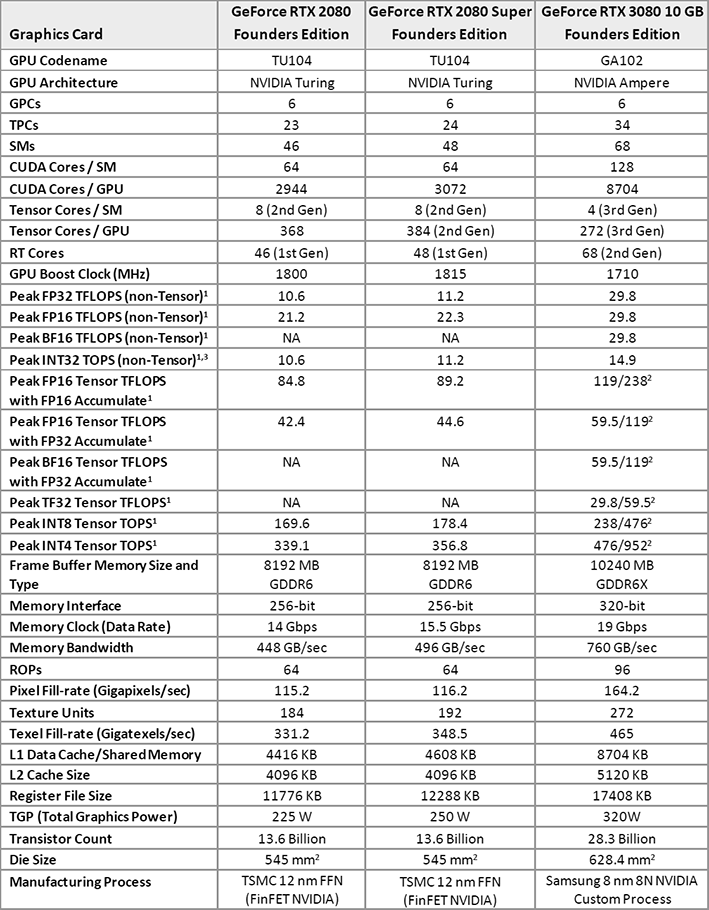

Above is a detailed spec comparison between the new Ampere-based GeForce RTX 3080 and its Turing-based predecessors, the GeForce RTX 2080 and 2080 Super. Before we dig in though, we suggest checking out a few previous articles to lay the foundation for the product we’ll be covering here today. Perusing our coverage of NVIDIA’s initial GeForce RTX 30 series announcement and the deeper dive on its new features and Ampere architecture would be ideal. If you ain’t got time for that though, we’ll summarize much of Ampere’s new mojo again here.

As you can see in the table above, the GeForce RTX 3080 is bigger and beefier than the RTX 2080 / 2080 Super in almost every way, with two exceptions: the RTX 3080 has fewer Tensor cores and a lower default boost clock. The GPU’s additional resources are more than enough to compensate for the lower default boost clock, however, and Ampere’s Tensor cores offer more than double the throughput and support additional types of math. In terms of fillrate, memory bandwidth, and compute performance, the GeForce RTX 3080 is significantly more powerful.

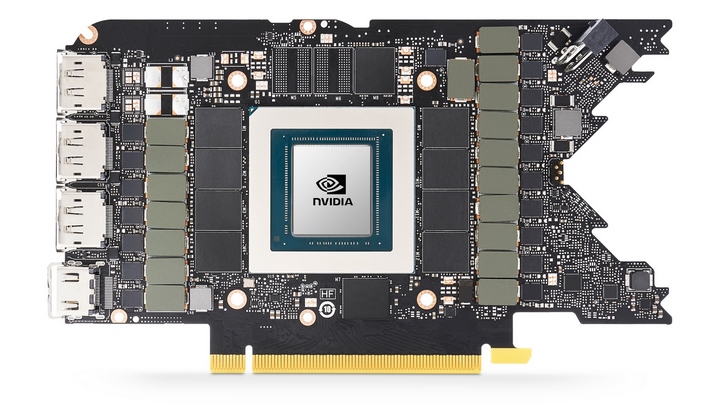

The GA102 GPU at the heart of the GeForce RTX 3080 packs 28 billion transistors into its 628.4mm² die and is manufactured on a custom Samsung 8nm process (8N). The previous-gen Turing TU104 powering the RTX 2080 used a 12nm FinFET process and packed fewer than half the number of transistors.

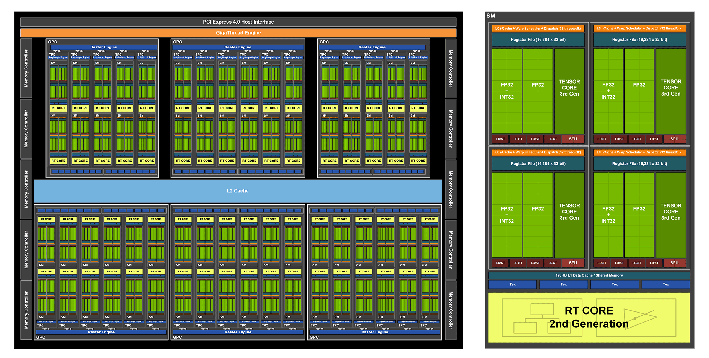

All of those additional transistors were used to enhance Ampere’s performance for essentially every type of workload you can run on GPUs. Previous-gen architectures, before Turing, had only one math datapath, for example. With Turing, NVIDIA added a second, giving the GPU one datapath for floating point and one for integer. But with Ampere, that second integer path has been further augmented with an additional FP32 unit, so floating point heavy workloads have significantly more resources available.

The 2nd Generation RT cores have been optimized for better performance as well. The GeForce RTX 3080 has 68 RT cores (up from 48 in the 2080 Super) and offers shader performance of up to 29.8 TFLOPS (vs. 11.2 on Turing) and RT-TFLOPS of up to 58 (versus 34 RT-TFLOPS on Turing), while its Tensor cores offer up to 238 TFLOPS of Int8, versus 89.
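For readers who want to see where the shader TFLOPS figures come from, here is a rough sketch of the usual peak-throughput arithmetic: cores times clock times two, since a fused multiply-add counts as two floating point operations. The core counts and boost clocks below (8,704 CUDA cores at 1,710MHz for the RTX 3080, 3,072 at 1,815MHz for the RTX 2080 Super) are taken from NVIDIA's published specs rather than from the text above, and the function name is just for illustration.

```python
def fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Theoretical peak FP32 rate, assuming one FMA (2 FLOPs) per core per clock."""
    flops_per_second = 2 * cuda_cores * boost_mhz * 1e6
    return flops_per_second / 1e12


# Assumed specs from NVIDIA's public spec sheets (not stated in the article text).
rtx_3080 = fp32_tflops(cuda_cores=8704, boost_mhz=1710)
rtx_2080_super = fp32_tflops(cuda_cores=3072, boost_mhz=1815)

print(f"GeForce RTX 3080:       {rtx_3080:.1f} TFLOPS")
print(f"GeForce RTX 2080 Super: {rtx_2080_super:.1f} TFLOPS")
```

These are theoretical peaks at the rated boost clock; real-world clocks and game performance will vary.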

The Streaming Multiprocessors in Ampere have also been tweaked. The new Ampere SMs double the L1 bandwidth and cache partition size and add 33% more L1 capacity, for a total of up to 8,704KB across the GPU. In addition, Ampere’s second-generation RT (ray tracing) cores can process triangle intersections at twice the rate of Turing, and its third-gen Tensor cores double up math performance for sparse matrices (matrices in which most of the elements are zero) and support many more math types and precision levels.
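The L1 numbers are easy to sanity check. The quick sketch below assumes 128KB of L1 per Ampere SM and 96KB per Turing SM (per-SM figures from NVIDIA's architecture documentation, not from this article), and assumes the RTX 3080's 68 RT cores map one-to-one to 68 SMs.

```python
# Assumed per-SM L1/shared memory sizes, in KB.
AMPERE_L1_KB_PER_SM = 128
TURING_L1_KB_PER_SM = 96

# One RT core per SM, so 68 RT cores implies 68 SMs (assumption).
RTX_3080_SM_COUNT = 68

total_l1_kb = RTX_3080_SM_COUNT * AMPERE_L1_KB_PER_SM
increase_pct = (AMPERE_L1_KB_PER_SM / TURING_L1_KB_PER_SM - 1) * 100

print(f"Total L1 on the RTX 3080: {total_l1_kb:,}KB")
print(f"Per-SM L1 increase over Turing: {increase_pct:.0f}%")
```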

While analyzing Turing’s performance characteristics, NVIDIA found that it often had good Bounding Box intersection rates, but Triangle Intersection rates were holding things back. With Ampere, NVIDIA wanted to be able to process Bounding Box and Triangle intersections in parallel. So, Ampere breaks out Bounding Box and Triangle resources to run in parallel, and along with the additional GPU resources available, Triangle Intersection rates end up being about twice as fast. A new Triangle Position Interpolation unit has also been added to Ampere to help produce more accurate motion blur effects.

The top-end Ampere-based GeForce RTX 3080 and upcoming GeForce RTX 3090 also feature Micron’s bleeding-edge GDDR6X memory (the 3070 coming next month uses standard GDDR6), which offers significantly higher bandwidth. GDDR6X uses new 4-level PAM4 signaling that can transmit twice as much data per clock, effectively doubling bandwidth. High-end Ampere-based GeForces will use GDDR6X memory at up to 19Gbps. On the GeForce RTX 3080, which features a 320-bit memory interface, that means the GPU has up to 760GB/s of bandwidth at its disposal versus 496GB/s on the RTX 2080 Super.
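The bandwidth figures fall straight out of bus width and per-pin data rate, as the short sketch below shows. The RTX 3080's 320-bit bus and 19Gbps memory come from the paragraph above; the RTX 2080 Super's 256-bit bus and 15.5Gbps GDDR6 are assumptions drawn from NVIDIA's published specs, and the function name is ours.

```python
import math


def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: pins x per-pin rate (Gbit/s), divided by 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


rtx_3080_bw = memory_bandwidth_gbs(bus_width_bits=320, data_rate_gbps=19)
# Assumed specs for the RTX 2080 Super (not stated in the article text).
rtx_2080_super_bw = memory_bandwidth_gbs(bus_width_bits=256, data_rate_gbps=15.5)

# PAM4 uses four signal levels, so each symbol carries log2(4) = 2 bits,
# versus 1 bit per symbol for conventional two-level signaling.
pam4_bits_per_symbol = math.log2(4)

print(f"RTX 3080:       {rtx_3080_bw:.0f}GB/s")
print(f"RTX 2080 Super: {rtx_2080_super_bw:.0f}GB/s")
print(f"PAM4 bits per symbol: {pam4_bits_per_symbol:.0f}")
```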

You’ll notice we haven’t talked about SLI or multi-GPU much with Ampere. And that’s because only the GeForce RTX 3090 supports it. The GA102 GPU has a newer 3rd Gen NVLink interface, which includes four x4 links, each providing up to 14.0625GB/sec of bandwidth in each direction, for a total of 56.25GB/sec in each direction or 112.5GB/sec of total aggregate bandwidth between two GPUs. Two GeForce RTX 3090s can be linked for operation in SLI, but 3-Way and 4-Way SLI configurations are not supported.
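The NVLink totals are simple multiplication, sketched below using the figures quoted above. Reading the per-link number as one direction of traffic, so that the aggregate counts both directions, is our assumption; the variable names are illustrative only.

```python
NVLINK_LINKS = 4
PER_LINK_GBS = 14.0625  # per-link figure quoted above

# Sum across all four links, then count traffic both ways for the aggregate.
all_links_gbs = NVLINK_LINKS * PER_LINK_GBS
aggregate_gbs = 2 * all_links_gbs

print(f"Four links combined: {all_links_gbs}GB/sec")
print(f"Aggregate between two GPUs: {aggregate_gbs}GB/sec")
```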

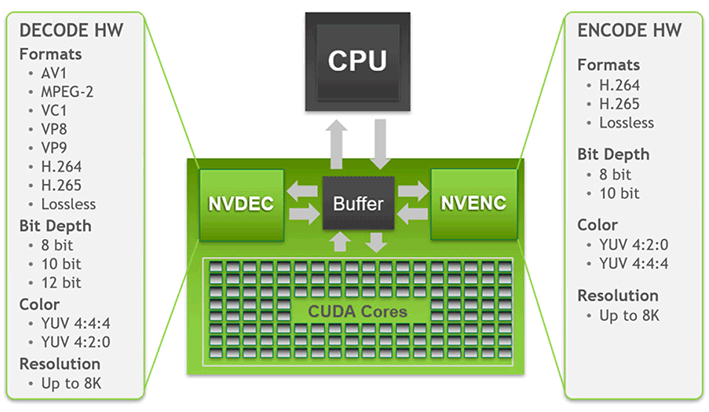

Ampere also features a native PCIe Gen 4 interface and some new video decoding capabilities. The GA102 sports the same 7th Gen NVENC encoding engine as Turing, but has a newer 5th Gen NVDEC engine. NVIDIA’s 5th Gen decoder supports hardware-accelerated decoding of the MPEG-2, VC-1, H.264 (AVCHD), H.265 (HEVC), VP8, VP9, and AV1 codecs. That AV1 support is brand new and makes Ampere the first GPU to support AV1 decoding in shipping hardware.

With such a big, powerful, complex chip, NVIDIA made some major modifications in an attempt to increase power efficiency as well. With previous-gen architectures, NVIDIA had one common power rail for both the GPU cores and memory controller. A single-rail design meant that if the cores wanted to run at high voltage, the memory had to as well. That changes with Ampere, however. With Ampere, NVIDIA has split the core and memory power rails into separate domains, so they can operate independently. The dual power rails should allow for more energy conservation, which ultimately means improved power and thermal characteristics.

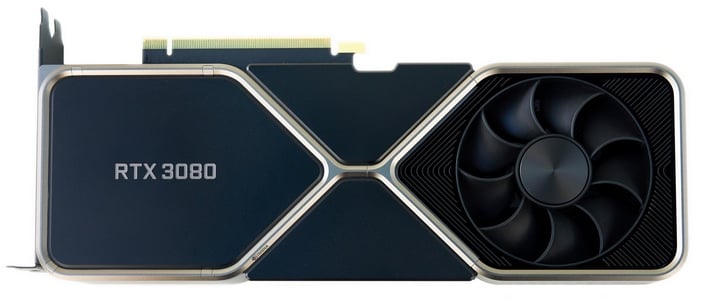

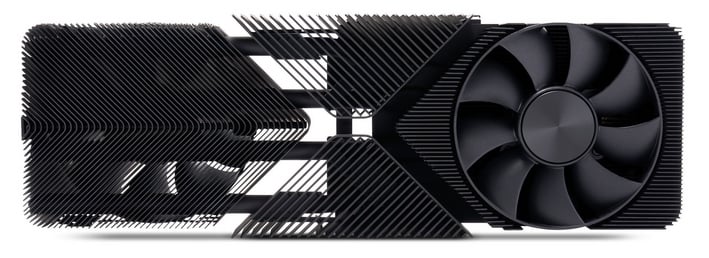

To help ensure the thermal part of the efficiency story is a good one, NVIDIA engineered an interesting cooling solution for its GeForce RTX 30 series cards. The cooler on the GeForce RTX 3080 features dual axial fans and a split heatsink design that is quieter than previous-gen solutions, while offering the ability to dissipate up to 90 more watts of power. One end of the heatsink array sits directly atop a vapor chamber that is affixed to the GPU and memory. The fan above that section directs air through the heatsink and immediately funnels it out of the chassis through large vents in the case bracket. The heatsink on the back half of the card, however, which is linked to the front vapor chamber via heat-pipes, allows air from the second fan to pass all the way through, where it rises to the top of the chassis and is eventually exhausted, assuming the system’s case has adequate ventilation.

The new push/pull, passthrough cooler on the GeForce RTX 3080 works in conjunction with a denser PCB design that has a unique V-shaped rear edge. NVIDIA’s GeForce RTX 3080 also features a miniaturized 12-pin power connector, though most partner boards we have seen use the traditional PCIe connectors common today. NVIDIA includes an adapter with its RTX 3080 that converts a pair of 8-pin PCIe connectors to the new mini-12-pin design, and we're told PSU manufacturers will be offering modular cables with the new connector as well.

In terms of outputs, the GeForce RTX 3080 has triple full-sized DisplayPorts and a single HDMI output. The USB-C connector on high-end Turing cards, which was meant to facilitate connection to VR headsets, wasn't being used often, so NVIDIA nixed it. We should note that the HDMI output is an HDMI 2.1 port, however. HDMI 2.1 support enables 4K120P with G-Sync on some of the latest OLED TVs and allows for 8K over a single cable.
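To put the HDMI 2.1 point in perspective, here is a back-of-the-envelope estimate of the raw pixel data rate for 4K at 120Hz. It assumes 8-bit RGB (24 bits per pixel) and ignores blanking intervals and link encoding overhead, so it understates what the cable really has to carry. The 18Gbps and 48Gbps ceilings are the maximum link rates for HDMI 2.0 and HDMI 2.1, respectively.

```python
HDMI_2_0_MAX_GBPS = 18  # maximum link rate for HDMI 2.0
HDMI_2_1_MAX_GBPS = 48  # maximum link rate for HDMI 2.1


def raw_video_gbps(width: int, height: int, refresh_hz: int, bits_per_pixel: int = 24) -> float:
    """Uncompressed pixel data rate in Gbit/s, ignoring blanking and encoding overhead."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


uhd_120 = raw_video_gbps(3840, 2160, 120)

print(f"4K120 raw pixel data: {uhd_120:.1f}Gbps")
print(f"Fits within HDMI 2.0: {uhd_120 <= HDMI_2_0_MAX_GBPS}")
print(f"Fits within HDMI 2.1: {uhd_120 <= HDMI_2_1_MAX_GBPS}")
```

Even before overhead, 4K120 at full color exceeds what HDMI 2.0 can carry, which is why the newer port matters for high-refresh 4K TVs.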

Even More Features Coming With the RTX 30 Series

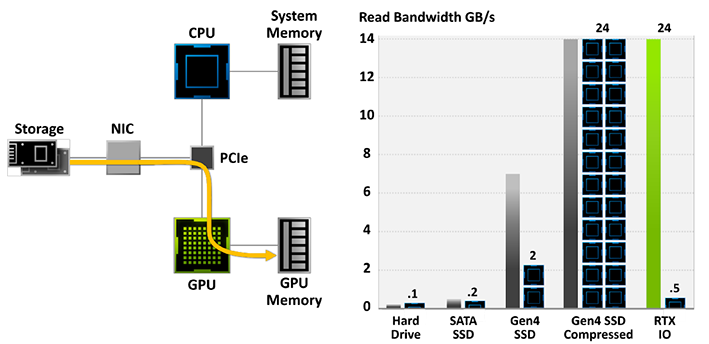

The GeForce RTX 30 series also supports a new feature called RTX IO. RTX IO minimizes CPU overhead when processing data from fast SSDs, and reduces workloads that used to require multiple CPU cores down to a single core, freeing up resources to work on other things. With the latest PCIe Gen 4 SSDs capable of ~7GB/s transfers, transferring data to and from the drives and compressing/decompressing it can require a significant amount of CPU horsepower. RTX IO interfaces with the DirectStorage API coming to DirectX to overcome this potential bottleneck by leveraging GPU core resources for compression and decompression and streaming data directly to GPU memory.

We should note, however, that to use RTX IO and DirectStorage, games will have to be designed to support the feature, and no games that do so are available at this time. Considering similar technology is coming to both next-gen consoles, though, it’s a safe bet game developers will be jumping all over this one now that something similar will be available on the PC as well.

NVIDIA also announced NVIDIA Reflex during the GeForce RTX 30 series reveal. NVIDIA Reflex is a latency-reduction technology that’s designed to optimize end-to-end latency, often referred to as input lag or input latency. NVIDIA Reflex streamlines multiple steps along the rendering pipeline to make more efficient use of CPU and GPU resources, minimize driver overhead, and, according to NVIDIA, reduce latency by up to 50%. NVIDIA Reflex Low-Latency Mode is currently being integrated into some esports games, like Apex Legends, Call of Duty: Warzone, Fortnite and Valorant, but others will likely be coming.

NVIDIA Broadcast is coming to RTX GPUs as well. NVIDIA Broadcast is a plug-in that leverages the GPU and AI to enhance audio and video quality, and offer things like noise reduction, camera tracking and virtual backgrounds. NVIDIA Broadcast should be available to download soon and is compatible with any RTX-enabled GPU, both Turing and Ampere.