The State of DirectX 10 - Image Quality & Performance

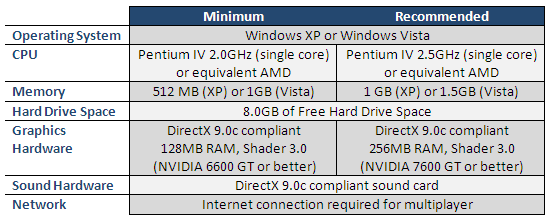

Lost Planet System Requirements

For our benchmarks, all graphics settings were turned up to their highest level. Anti-aliasing was turned on and set to 4X while anisotropic filtering was set to 16X. Vertical sync was manually disabled in-game as well as forced off in the graphics driver options. World in Conflict can be toggled between DX9 and DX10 rendering with an option in the video settings menu, although a game restart is required before changes take effect. Note that we used the demo version of the game for all of our tests.

Like Company of Heroes, World in Conflict has a built-in, in-game benchmark test. The test in the demo version of the game consists of a flyby over mission 3 of the single-player campaign. During the flyby, different graphical aspects of the game are demonstrated while a large battle takes place across the map. We found the results of this built-in benchmark to be a good indication of what typical in-game performance would be like.

For our tests, we ran the built-in benchmark five times per resolution on each video card. Runs that produced anomalous values, out of line with the rest of the results, were attempted a second time. We then averaged the results for each resolution to obtain our final figures.
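The averaging procedure described above can be sketched in a few lines of Python. This is only an illustration, not the tooling used for the article: the function names, the 15% tolerance around the median used to decide what counts as a "strange" value, and the sample frame rates are all assumptions made for the example.

```python
from statistics import mean, median

def flag_outliers(runs, tolerance=0.15):
    """Return indices of runs that deviate from the median by more than
    `tolerance` (a fraction of the median). The 15% default is an assumed
    threshold; the article does not define one."""
    mid = median(runs)
    return [i for i, fps in enumerate(runs) if abs(fps - mid) > tolerance * mid]

def average_fps(runs, rerun, tolerance=0.15):
    """Replace each flagged run with one re-attempt obtained from `rerun()`,
    then average all runs for this card and resolution."""
    results = list(runs)
    for i in flag_outliers(results, tolerance):
        results[i] = rerun()  # a flagged run is attempted a second time
    return mean(results)

# Hypothetical numbers for one card at one resolution (not measured data).
runs = [31.0, 30.0, 32.0, 12.0, 31.0]
print(flag_outliers(runs))                           # index of the odd run
print(round(average_fps(runs, lambda: 30.0), 1))     # average after the rerun
```

Here `rerun` stands in for launching the benchmark again and reading back its average frame rate. A flagged run is only replaced once, matching the "attempted a second time" wording.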


The 8800 GTX remains the overall top performer. In DX9, the 8800 GTS comes in second with the 2900 XT a close third; in DX10, the situation is reversed. The 8800 GTX was playable at all times and we didn't notice any slowdown, even at 1920x1200 with DX10 rendering turned on. While the 8800 GTS and the 2900 XT don't perform quite as well, often dipping below the 20 FPS mark, they both remained fairly playable at all resolutions in both DX9 and DX10.

Unfortunately, our two mid-range cards didn't do very well and posted unplayable results in both DX9 and DX10 with the settings we chose. The one exception was the 8600 GTS in DX9 at 1280x1024, where it was able to hum along at an average of 18 FPS and was playable, although prone to stutters and sharp frame rate drops. We'd like to remind you again that our results were obtained with the game in its highest image quality mode. It is entirely possible to play World in Conflict smoothly on both of our mid-range cards when using lower video settings.