NVIDIA Unveils Vera Rubin Platform And Groq 3 Integration to Power Agentic AI Factories

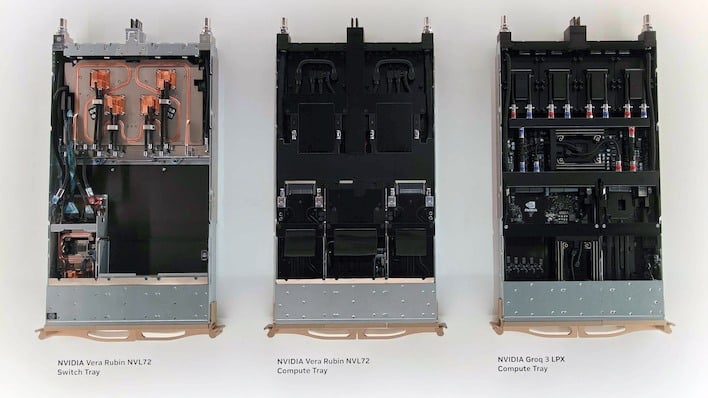

According to NVIDIA, the Vera Rubin NVL72 rack integrates no fewer than seven brand-new chips: Vera CPUs, Rubin GPUs, NVLink6 switches, ConnectX-9 NICs, BlueField-4 DPUs, Spectrum-X NICs, and Groq 3 LPUs. NVIDIA claims that this silicon adds up to an order-of-magnitude improvement in performance per watt over Grace Blackwell, which is quite a thing if true.

NVIDIA Launches Diverse Vera Rubin Ecosystem At GTC 2026

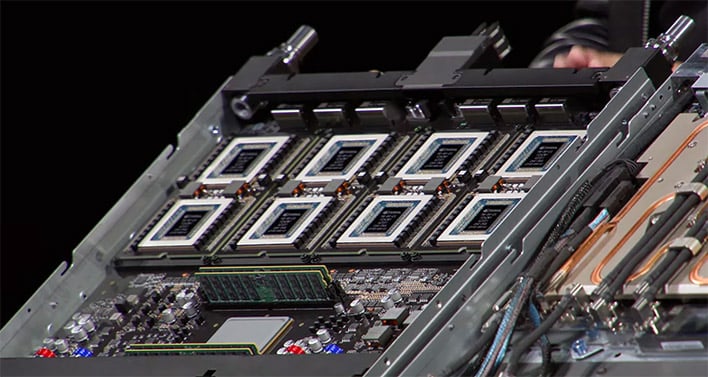

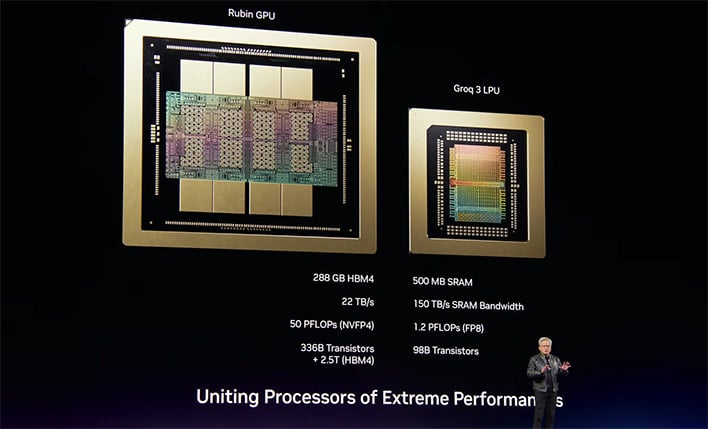

NVIDIA had initially planned to name the systems after the number of GPU dies, but that apparently would have been too large a departure from the previous nomenclature, so we're back to counting GPU packages; thus, NVL72. The company says this configuration is designed to deliver breakthrough efficiency, claiming it can train large mixture-of-experts models in the same time using one-fourth the number of GPUs compared to its previous Blackwell platform.

Alongside this, NVIDIA introduced the Groq 3 LPU and the LPX rack. The Groq 3 LPU (Language Processing Unit) is a relatively modest chip in terms of compute, with 1/25th the performance of a Rubin GPU. However, it has a massive 500 MB SRAM cache with 150 TB/sec of bandwidth, absolutely dwarfing even the 22 TB/sec of Rubin. This gives it a particular advantage in workloads that need low latency more than high throughput.
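To put those bandwidth figures in perspective, here is a rough back-of-the-envelope sketch using only the numbers NVIDIA quoted. It assumes decimal units (1 MB = 10^6 bytes, 1 TB = 10^12 bytes) and an idealized case where the full bandwidth is sustained; the function names are ours, purely for illustration, and none of this is a measurement.

```python
# Vendor-quoted figures from the article (claims, not benchmarks).
LPU_SRAM_BYTES = 500e6        # 500 MB of on-chip SRAM, decimal units assumed
LPU_SRAM_BW = 150e12          # 150 TB/sec SRAM bandwidth
RUBIN_MEM_BW = 22e12          # 22 TB/sec memory bandwidth quoted for Rubin


def bandwidth_ratio(fast: float, slow: float) -> float:
    """How many times more bandwidth the faster memory offers."""
    return fast / slow


def full_sweep_seconds(capacity_bytes: float, bandwidth: float) -> float:
    """Idealized time to read the whole memory once at peak bandwidth."""
    return capacity_bytes / bandwidth


ratio = bandwidth_ratio(LPU_SRAM_BW, RUBIN_MEM_BW)
sweep = full_sweep_seconds(LPU_SRAM_BYTES, LPU_SRAM_BW)

print(f"LPU SRAM bandwidth vs. Rubin: about {ratio:.1f}x")
print(f"Idealized full SRAM read: about {sweep * 1e6:.1f} microseconds")
```

By that math, the LPU's SRAM offers roughly 6.8 times the bandwidth NVIDIA quotes for Rubin, and the entire 500 MB could in principle be read in a few microseconds, which is where the latency advantage comes from.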

The Groq 3 LPU will primarily be deployed in the Groq 3 LPX rack, which houses 256 LPU chips and is designed specifically for low-latency, large-context agentic systems. NVIDIA states that when deployed together with the Vera Rubin NVL72, the combined processors can deliver up to 35x higher "tokens per second per megawatt" versus Blackwell.

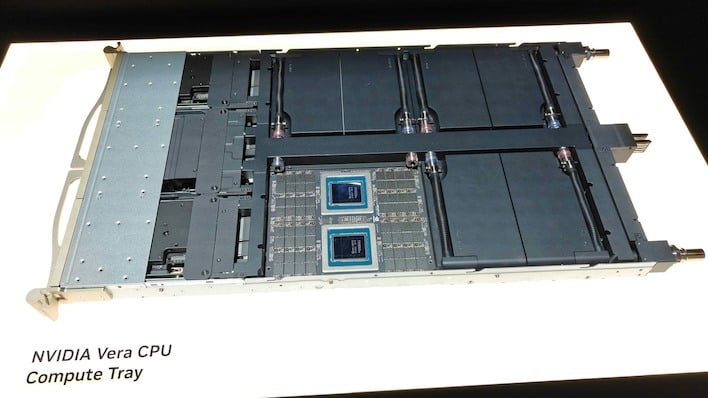

NVIDIA also officially launched its Vera CPU today, framing it as the first processor purpose-built for reinforcement learning and agentic AI. According to the company, the CPU features 88 custom NVIDIA-designed "Olympus" cores. NVIDIA claims the Vera CPU delivers results 50% faster and with twice the efficiency of traditional rack-scale CPUs, by which it means x86-64 chips.

NVIDIA Vera CPU-Only Rack Debuts At GTC

Alongside the launch of the chip itself, NVIDIA's also debuting a new Vera CPU-only rack configuration that integrates 256 liquid-cooled CPUs. The company states that this rack can sustain over 22,500 concurrent CPU environments running independently at full performance, which is very impressive if the system can achieve that in real-world workloads.
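For what it's worth, the 22,500 figure lines up neatly with the rack's raw core count. The quick check below multiplies the two numbers NVIDIA gave; mapping one environment to one physical core is our assumption, not something NVIDIA states in this announcement, and the names are illustrative.

```python
# Figures quoted in the article.
CPUS_PER_RACK = 256           # liquid-cooled Vera CPUs per rack
CORES_PER_CPU = 88            # "Olympus" cores per Vera CPU
CLAIMED_ENVIRONMENTS = 22_500  # NVIDIA says "over 22,500"


def rack_core_count(cpus: int, cores_per_cpu: int) -> int:
    """Total physical cores in the rack."""
    return cpus * cores_per_cpu


total_cores = rack_core_count(CPUS_PER_RACK, CORES_PER_CPU)

# Assumption: one concurrent environment per physical core.
print(f"Total cores per rack: {total_cores:,}")  # 22,528
print(f"Covers the claimed figure: {total_cores >= CLAIMED_ENVIRONMENTS}")
```

In other words, 256 CPUs with 88 cores each works out to 22,528 cores, which would explain "over 22,500" if each environment gets a core to itself.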

Enter A New AI-Optimized Storage Solution: BlueField-4 STX

To handle the data requirements of these massive clusters, NVIDIA detailed its BlueField-4 STX rack-scale system. According to NVIDIA, traditional general-purpose storage lacks the responsiveness needed for seamless interaction with AI agents. The STX system aims to solve this by extending GPU memory across the pod, providing a high-bandwidth layer optimized for the key-value cache data generated by large language models.

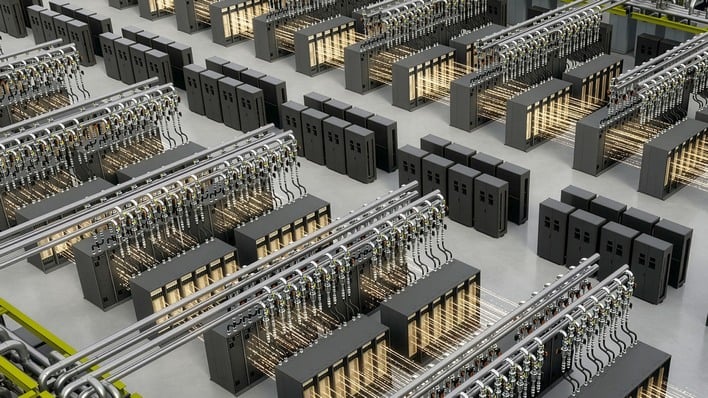

Acknowledging the complexity of building these massive data centers, NVIDIA is introducing the Vera Rubin DSX AI Factory reference design. The company also announced the general availability of the NVIDIA Omniverse DSX Blueprint. The blueprint allows developers to build physically accurate digital twins of the gigawatt datacenters that NVIDIA has termed "AI factories." According to NVIDIA, this enables companies to simulate operations and optimize performance before construction even begins. The system includes software like DSX Flex, which NVIDIA claims will allow datacenters to dynamically adjust power use based on demand to maintain grid stability.

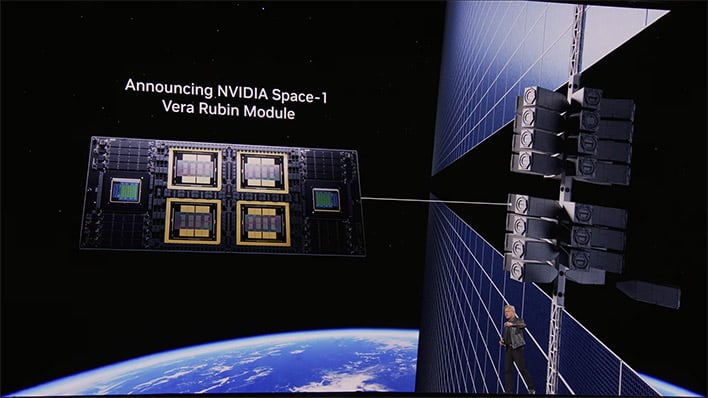

Finally, NVIDIA says it is taking its accelerated computing platforms to space. The company announced the Vera Rubin Space Module, which it claims delivers up to 25x more AI compute for space-based inferencing compared to its previous H100 GPU. This module is designed for size, weight, and power-constrained (SWaP) environments to support orbital data centers and autonomous space operations.

According to NVIDIA, Vera Rubin-based products and the new Vera CPU will be available from its partners starting in the second half of 2026.