NVIDIA Shows Blackwell Slashing AI Inference Costs By 10X With Open Models

In a post titled "Leading Inference Providers Cut AI Costs by up to 10x With Open Source Models on NVIDIA Blackwell," the company's Shruti Koparkar goes over a few examples of companies in the healthcare, gaming, agentic chat, and customer service industries that have reduced their AI costs. They did so by purchasing cutting-edge Blackwell GPU hardware and running open-source AI models on it, rather than using older Hopper hardware or, worse, paying API fees to run their services on somebody else's hardware.

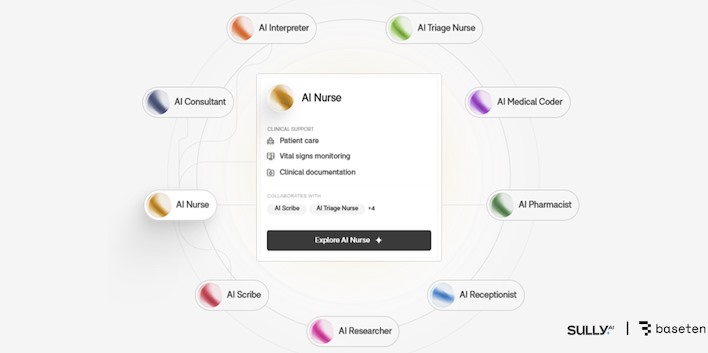

Actually achieving that "10×" number is non-trivial, though. Sully.ai, which builds "AI employees" for clinical documentation and medical coding, reported a 90% reduction in inference costs after moving from proprietary, closed-source models to open-source models running on Blackwell GPUs via Baseten's Model API.

NVIDIA attributes this improvement not just to the new hardware, but to an aggressive stack of optimizations: switching to the low-precision NVFP4 format, using TensorRT-LLM and the Dynamo inference framework, and tailoring the deployment for Sully.ai's highly predictable, real-time clinical workloads. In other words, Sully.ai didn't just swap GPUs; instead, it rebuilt its entire inference pipeline end to end, making it something of a best-case scenario for NVIDIA's tokenomics pitch.

Other operators found smaller gains, though it's difficult to imagine any company complaining about a 75% reduction in cost per token. That is what AI Dungeon developer Latitude achieved by switching from Hopper to Blackwell and quantizing its model down to NVFP4. Normally a quantized model will offer inferior results, but NVIDIA specifically notes that Latitude "maintained the accuracy that customers expect."
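
For readers comparing the headline "10x" with the percentages quoted above, the two are the same measurement expressed differently. Here is a minimal sketch of the conversion; the function name is our own and does not come from NVIDIA's post:

```python
def reduction_to_multiplier(reduction_pct: float) -> float:
    """Convert a percentage cost reduction into an 'N times cheaper' factor."""
    if not 0 <= reduction_pct < 100:
        raise ValueError("reduction_pct must be at least 0 and below 100")
    # If 90% of the cost is gone, 10% remains, so the old cost is 100/10 times the new one.
    return 100.0 / (100.0 - reduction_pct)


# Sully.ai and Latitude figures are the ones quoted in NVIDIA's post;
# the 50% row is included purely for comparison.
for label, pct in [("Sully.ai", 90), ("Latitude", 75), ("a 50% cut", 50)]:
    print(f"{label}: {pct}% cheaper = {reduction_to_multiplier(pct):.0f}x")
```

The relationship is steeply nonlinear: going from a 75% to a 90% reduction more than doubles the multiplier, which is part of why the full "10x" is so much harder to reach.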

Sentient Labs, operator of the advanced Sentient Chat, and Decagon, the creator of those often-cursed AI-powered agents for enterprise customer support that are showing up on every site, saw similar results. The key takeaway from each example is basically this: companies that switched to locally owned Blackwell GPUs running open-source or in-house-trained models specifically optimized for NVIDIA's architecture cut their cost per token by half or more.

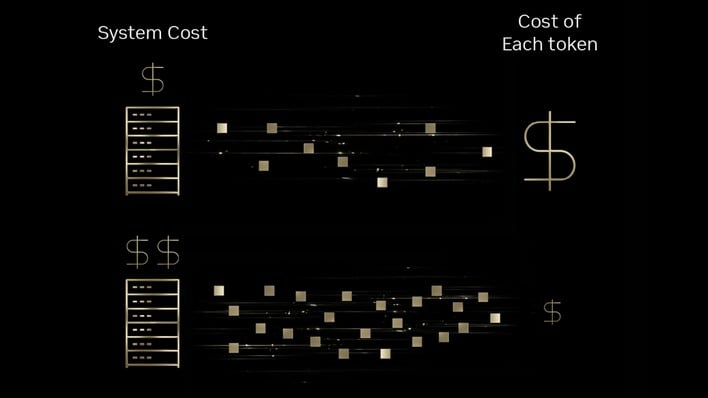

NVIDIA does show, particularly in the slide above, that Blackwell machines are more expensive than Hopper systems, and as a result, companies' up-front cost (CapEx) will spike. The expectation is that a hopefully radical improvement in operational costs (OpEx) will offset the up-front expenditure. Given how many companies have struggled to capitalize on AI investment, we're not completely sure this reasoning is supported by data, but it's sensible enough.
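
To make the CapEx-versus-OpEx trade-off concrete, here is a rough break-even sketch. Every number in it is a hypothetical placeholder chosen for easy arithmetic, not a figure from NVIDIA's post or real pricing, and the model ignores power, staffing, depreciation, and utilization:

```python
def breakeven_million_tokens(
    extra_capex_usd: float,
    old_usd_per_million: float,
    new_usd_per_million: float,
) -> float:
    """Millions of tokens that must be served before per-token savings repay the extra up-front spend."""
    saving_per_million = old_usd_per_million - new_usd_per_million
    if saving_per_million <= 0:
        raise ValueError("the new cost per token must be lower than the old one")
    return extra_capex_usd / saving_per_million


# Hypothetical inputs, for illustration only.
extra_capex = 300_000.0  # extra up-front cost of the newer system, in USD
old_price = 4.00         # USD per million tokens on the older system
new_price = 1.00         # USD per million tokens after a 75% reduction

volume = breakeven_million_tokens(extra_capex, old_price, new_price)
print(f"Break-even after {volume:,.0f} million tokens")
```

The takeaway is that the upgrade only pays for itself if the operator actually serves enough tokens; a company with modest traffic may never reach the break-even volume before the next hardware generation arrives.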

The really interesting takeaway from NVIDIA's post is the claim that open-source models "have now reached frontier-level intelligence." It's definitely true that the latest open-source models are capable of incredible things. The "frontier-level" equivalence claim is a little suspect, though. NVIDIA mentions Sully.ai making use of the gpt-oss-120b open-source model, which we've tested. While it's impressive for what it is, and it might be "good enough," it's certainly not competitive with the latest proprietary models from OpenAI, Google, or Anthropic.

Of course, that's just one example, but the trend holds in other areas, like image and video generation. The open-source LTX-2 video model can do amazing things, but it's absolutely nothing compared to ByteDance's new Seedance 2.0 model, which features unbelievable quality and strong prompt adherence. NVIDIA said back at CES 2026 that the gap was closing between open-source models and frontier proprietary models, and that's a much more defensible claim, but we don't think we're quite there yet.

In any case, it doesn't take a genius to conclude that self-hosting is cheaper than paying for someone else's compute, or that using the latest hardware with the formats and frameworks it was co-designed for offers improved efficiency and performance. Individual vendors will have to do the math to see if upgrading to Blackwell after just one generation (from Hopper) is really worth it, though. After all, NVIDIA closes out its blog post by promising another 10x drop in cost per token with the next-generation NVIDIA Rubin platform. Maybe it's better to wait for those machines and realize a 100x improvement in "tokenomics"?