NVIDIA Unveils DLSS 3.5 With AI Ray Reconstruction And Not Just For RTX 40 GPUs

Anyone who has played a path-traced game, like Cyberpunk 2077 in "Ray Tracing: Overdrive" mode, or even a game with extensive RT effects like Dying Light 2: Stay Human, will be instantly familiar with ray-tracing denoising artifacts. It's ghosting of the worst sort, as if your monitor were an MVA LCD from 2006. Objects leave visible trails behind them, and lighting effects can take multiple frames to catch up with changes in the scene. At a low framerate that delay can reach 100 milliseconds or more, well past the threshold of "noticeable".
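To put rough numbers on that, here is a quick back-of-the-envelope sketch. The four-frame settle time is purely an assumption for illustration, not a figure NVIDIA has published, and `lag_ms` is just a helper name made up for this example.

```python
def lag_ms(frames: int, fps: float) -> float:
    """Milliseconds spanned by `frames` consecutive frames at `fps`."""
    return frames * 1000.0 / fps

# Hypothetical: assume lighting needs four frames to settle after a change.
SETTLE_FRAMES = 4

for fps in (30, 60, 120):
    print(f"{fps:>3} fps: {SETTLE_FRAMES} frames = {lag_ms(SETTLE_FRAMES, fps):.1f} ms")
```

Under that assumption, 30 fps puts the delay above 100 ms, while 120 fps keeps it to roughly a third of that. That is why the ghosting is so much harder to ignore when the framerate is low.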

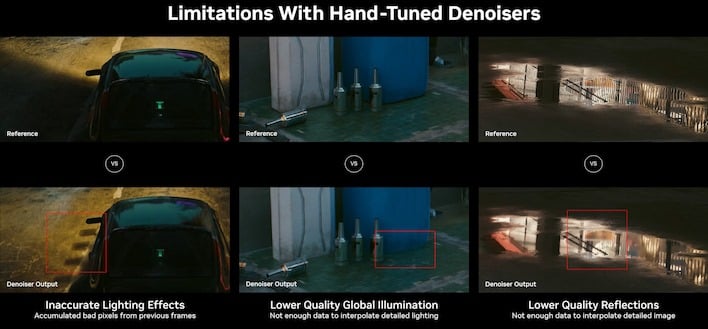

NVIDIA says that if you load up a game like Cyberpunk 2077 right now, a lot of the work that the ray-traced renderer is doing is actually being discarded by the denoisers, particularly in terms of global illumination and reflections. Because the denoisers are tuned to reduce noise, highly detailed effects that only appear on small regions of the screen look like noise to the relatively simple algorithm trying to generate a coherent image out of the ray-traced noise.
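A toy example makes the problem easier to see. The sketch below uses a plain box blur as a stand-in for a denoiser. Real game denoisers are far more sophisticated filters that work across both space and time, so treat this as an illustration of the general failure mode only, not of what any shipping game does.

```python
def box_filter(signal, radius):
    """Average each sample with its neighbours within `radius` (clamped at the edges)."""
    n = len(signal)
    out = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        window = signal[lo:hi]
        out.append(sum(window) / len(window))
    return out

# A dark row of 21 pixels with one bright, single-pixel glint in the middle.
row = [0.1] * 21
row[10] = 1.0

filtered = box_filter(row, radius=3)
print(f"glint before filtering: {row[10]:.2f}")
print(f"glint after filtering:  {filtered[10]:.2f}")
```

The single bright pixel is legitimate detail, but the filter cannot tell it apart from a stray noisy sample, so it gets averaged down to something close to its dark surroundings.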

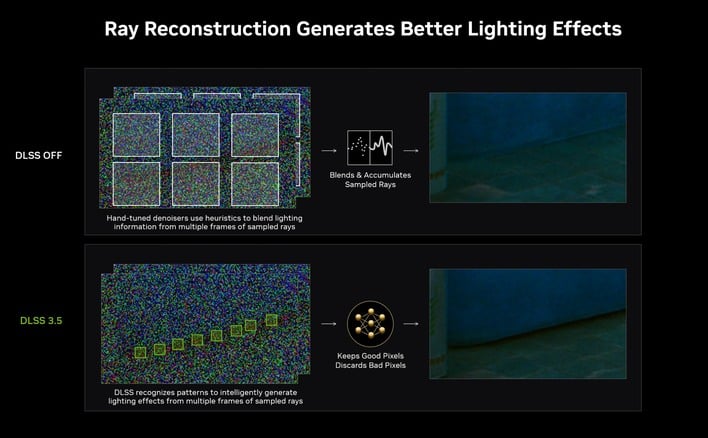

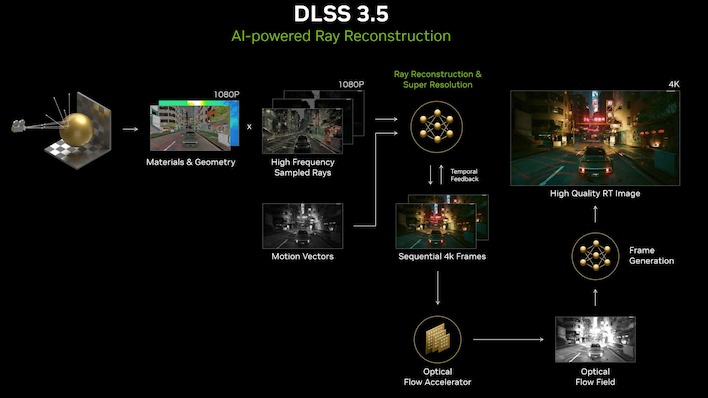

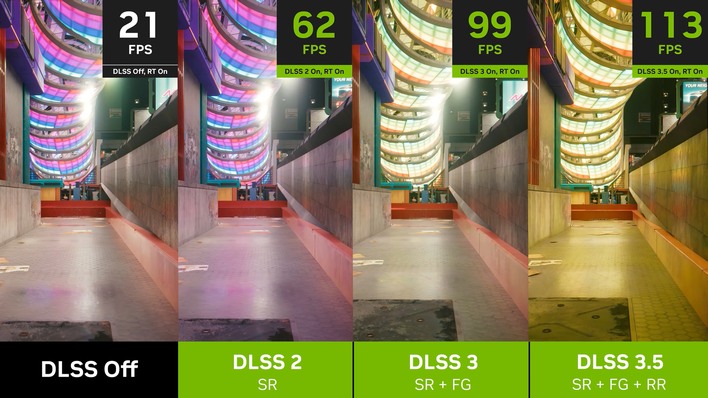

Well, NVIDIA just announced the solution to these woes: DLSS 3.5, now with a new feature called Ray Reconstruction. Put simply, it's like DLSS Super Resolution, but specifically for ray tracing. If that confuses you, understand that we're talking about the process of compositing the scene before upscaling. In other words, instead of using a slower and more taxing denoiser to achieve better results, NVIDIA is side-stepping the whole process by replacing the collection of hand-tuned denoisers currently used for ray tracing with an AI model that does it better and faster.

The AI denoiser, which NVIDIA says was trained on five times more data than the model used for DLSS 3, is smart enough to recognize even small areas of shading and lighting. As a result, ray-traced scenes using it appear sharper, more accurate, and less prone to ghosting artifacts, at least judging by NVIDIA's demo footage in its video about the technology.

By the way, Ray Reconstruction isn't just for video games. The company also demonstrated a sample of the technique at work in the popular D5 Render real-time ray-tracing renderer, which is used alongside apps like 3ds Max, Blender, Cinema 4D, and others. While you wouldn't necessarily need this technique for an offline render (you could instead choose to simply cast thousands of times more rays per pixel), it does give a much more accurate preview of what your scene will look like while you're working on it.
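For a sense of why offline renderers can brute-force the problem, here is a minimal Monte Carlo sketch. It models a pixel as the average of random ray results, each of which "hits a light" with a made-up 20% probability. The function names and numbers are all assumptions for illustration and have nothing to do with how D5 Render or DLSS works internally.

```python
import random
import statistics

HIT_PROBABILITY = 0.2  # hypothetical chance that a single ray finds the light

def estimate_pixel(samples: int, rng: random.Random) -> float:
    """Average `samples` random ray results into one pixel brightness estimate."""
    hits = sum(1 for _ in range(samples) if rng.random() < HIT_PROBABILITY)
    return hits / samples

def noise(samples: int, trials: int = 2000, seed: int = 0) -> float:
    """Standard deviation of the pixel estimate across many independent trials."""
    rng = random.Random(seed)
    estimates = [estimate_pixel(samples, rng) for _ in range(trials)]
    return statistics.pstdev(estimates)

for samples in (1, 16, 256):
    print(f"{samples:>3} rays per pixel: noise ~ {noise(samples):.3f}")
```

The noise falls off roughly with the square root of the ray count, so 16 times as many rays buys only about a 4x cleaner pixel. That trade is fine for an overnight render but hopeless for an interactive viewport, which is where a smarter denoiser earns its keep.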