NVIDIA CEO Declares Moore's Law Dead And Details GeForce RTX 40 Series Cost Challenges

The 2015 International Technology Roadmap pinned 2021 as the year when miniaturization would hit its limit. While further progress could arguably be made beyond that point, it would become economically infeasible to do so. Now that we are in 2022, it is debatable whether we have quite reached this point. For its part, Intel believes it has ways of extending Moore’s Law into the Angstrom Era, using techniques like the stacked forksheet transistor.

NVIDIA is not quite so optimistic about beating the limits of Moore’s Law but has some other tricks up its sleeve. We had the opportunity to ask NVIDIA Founder and CEO, Jensen Huang, about his thoughts on process tech in the wake of GTC 2022 and the launch of the Ada Lovelace GeForce 40-Series graphics cards.

NVIDIA CEO Reflects On Process Tech

Dave Altavilla, our Editor-in-Chief, asked, “How impactful was the move from Samsung 8N to TSMC 4N? It seems TSMC 4N was a major advantage for you [NVIDIA]. I just wanted to understand how process plays in the success of Ada Lovelace, because it’s an impressive chip.”

The response from Huang was that gains were surprisingly modest. “Processed generationally from 8N to 4N, that process gain was probably about 15%. But unfortunately, the cost goes up by more than 15%,” he explained.

While he did not elaborate on just how much cost increased, he did liken Moore’s Law to a transistor density versus cost curve that historically had been going up. He stated, “But for the very first time, starting with about, I think around seven nanometer, yeah, around seven nanometer, the curve actually turned down. It didn’t flatten out. It turned down.” The NVIDIA CEO then just put it bluntly, noting “Moore’s Law is dead.”
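To make the arithmetic behind that remark concrete, here is a minimal sketch of how a gain compares against a cost increase. The 15% gain is the figure Huang cited; the cost increases are hypothetical placeholders, since he did not give a number, and the function name `value_ratio` is ours.

```python
def value_ratio(gain: float, cost_increase: float) -> float:
    """Return the gain delivered per dollar on a new node, relative to the old one.

    Both arguments are fractional changes, e.g. 0.15 for +15%.
    A result below 1.0 means each dollar buys less than it did before.
    """
    return (1.0 + gain) / (1.0 + cost_increase)


# 0.15 is the process gain Huang cited for 8N to 4N.
# The cost increases below are hypothetical; the article gives no figure.
for cost_increase in (0.15, 0.25, 0.50):
    ratio = value_ratio(0.15, cost_increase)
    print(f"cost +{cost_increase:.0%}: value ratio {ratio:.2f}")
```

Any cost increase above 15% pushes the ratio below 1.0, which is one way to read a curve that "turned down" rather than flattened out.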

As process tech has approached physical limits, the intricacy of production has dramatically increased. Jensen explained that NVIDIA’s chip production is accomplished in a series of steps, with approximately one step completed in the fab per day. Current cycle times have ballooned to around four months from start to finish.

“It’s not because TSMC is trying to capture more profit. That’s just not true,” he assured, “That’s a lot of steps. And so, you could say that the equipment’s more expensive, it takes more time in the fabs.”

“So, You Have To Find Other Ways To Do It.”

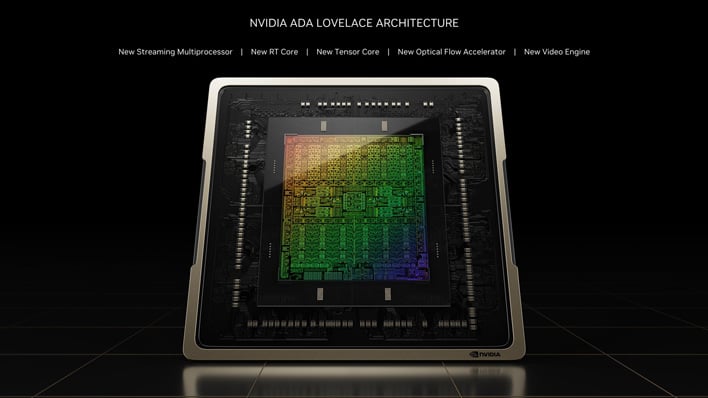

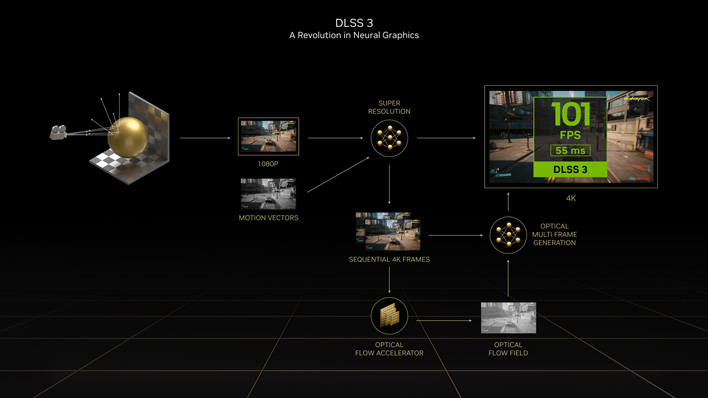

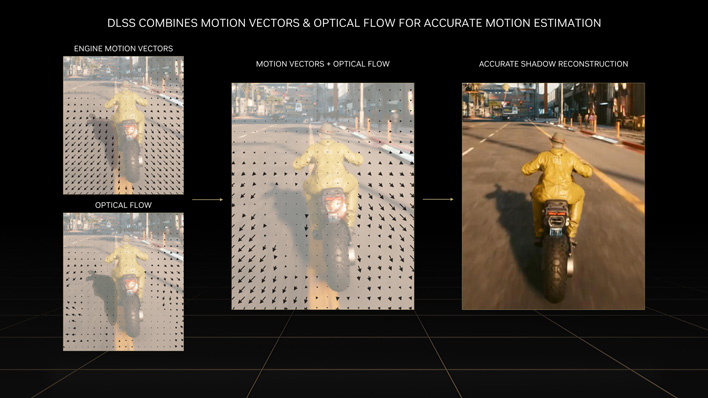

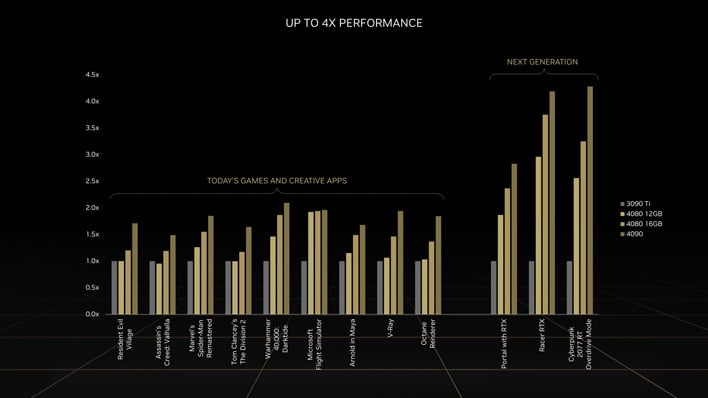

Huang explains that, “the way that we solved it, Dave, with Ada is architecture.” Ada combines multiple architectures: CUDA, Tensor, and RT. While traditional progress has been made via CUDA for rendering and rasterization, Jensen noted that Tensor and artificial intelligence are the “giant lever” for Ada.

“I would say that DLSS produces a better-looking pixel than GroundTruth, than the rendered pixel that we computed in the past,” elaborated Huang, claiming objectivity. He continued saying, “And the reason for that is because DLSS learned that pixel, not by computing, it learned from a 16K image. Not a 4K resolution image, a 16K resolution image. And so the pixels that it learned from were beautiful. There were no pixels. It’s close to raw. And so we had AI learn the colors to put in there, and it puts in a better looking color.”

Interestingly, NVIDIA's technology advancement with DLSS 3 does also, in a way, mark a reduced need for pure GPU rendering, at least when graphical demands are high. The CEO has asserted that artificial intelligence does it better. “It’s sufficiently subtle that in my opinion, it’s better than GroundTruth,” he stated, “It’s definitely different than GroundTruth, but it’s better than GroundTruth.”

To accomplish this, graphics rendering has transcended a set of operations running in individually siloed machines across the world. “Ada is generating computer graphics, not just at the moment. There’s a supercomputer in the background that was training the neural network,” says Huang, “And so in a lot of ways, Ada is Ada plus the supercomputer we have. And that’s the future. That’s the future, and that’s the reason why computer graphics is so amazing.”

Tensor Cores Rise To Prominence, As Did AI

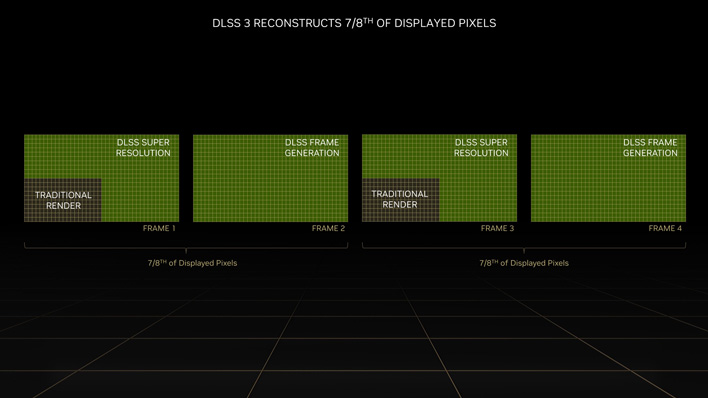

Huang went on further to note that, “...the combination between the supercomputer for priority training and coding the knowledge of pixels into the neural network and then processing the neural network, which is structurally really good for tensor core processing, we effectively multiply the throughput of our GPUs by a factor of eight. Well that more than overcame the weakness of Moore’s Law.”

Artificial Intelligence Arrived Just In Time

Speaking about DLSS 3 specifically, Huang reflected, “Without the CPU even doing anything, we generated an extra frame. It’s not predicting the future yet, but it’s close and it predicted an extra frame without the CPU ever having to touch it.” If these frames are accurate and look just as good as traditional rendering, if not better, then we are excited to see what advancements this brings in the future.

“Well, we just doubled the frame rate, but the neural network is doing all the work. And so, I think we have to overcome the weakness that we’re at the end of Moore’s Law, not by giving up, but by coming up with a lot more clever techniques, and thank goodness artificial intelligence came just in time.”

Where Do 3D Graphics Go From Here

Some have been resistant to the advent of neural networks and a shift away from the “ground truth” of raw GPU rendering in general. To that end, we could ask what the purpose of a graphics card is: is it not to generate as much pleasing eye-candy as possible?

Yes, the way this has been accomplished historically is through rendering, but it is not the only path forward. Our desires alone cannot beat physics. While AI upscaling techniques have had a shaky start with some peculiar artifacts and quirks, the technology has advanced and improved rapidly and dramatically. And of course, we can't pretend that traditional rendering has been immune to issues either.

The unfortunate consequence, as alluded to in the process tech discussion, is that costs have increased. Hitting the Moore’s Law wall hurts, but AI could eventually provide the avenue forward and back to lower-cost solutions. For those who expect or depend on “pure” rendering and rasterization, though, the way ahead will likely only become more expensive.