Micron May Stack GDDR Like HBM For A Major Graphics Memory Capacity Boost

If accurate, this move would likely be a direct response to the diversifying needs of AI accelerators. While GDDR has traditionally been the preserve of high-performance gaming graphics cards, its bandwidth advantage over standard system memory (DDR5) has led to its adoption in cost-sensitive AI inference hardware. Inference, the actual execution of AI models (as opposed to training, which creates them), requires massive capacity to hold data such as the Key-Value (KV) cache, but does not always demand the extreme speed of HBM, which is better suited to the much more bandwidth-intensive work of model training.

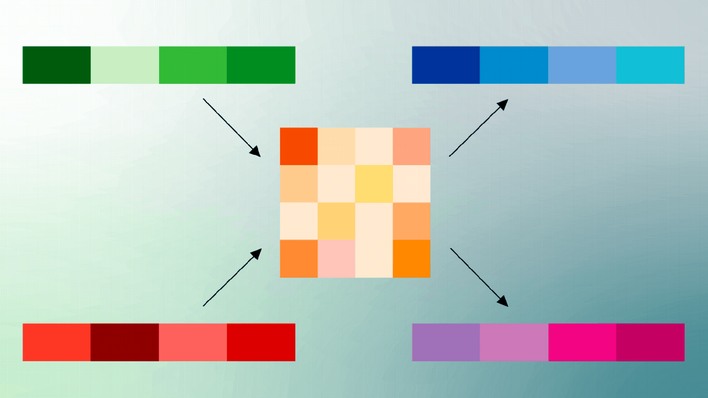

By stacking the modules, Micron hopes to bridge the gap between flagship HBM and standard GDDR. This new architecture would offer significantly higher capacity than traditional GDDR, giving AI clients the density they crave without the flagship price tag. The report notes that this would allow Micron to capture a "middle ground" in the market, appealing both to AI clients and to the still-growing high-performance gaming segment. However, the commercial viability of this project faces significant hurdles: heat management and power consumption on the technical side, plus a major new software variable that just upended the market, Google's TurboQuant.

Last week, Google unexpectedly unveiled TurboQuant, a compression algorithm that it says can reduce the memory footprint of Large Language Models (LLMs) by up to 6x without significant accuracy loss. This software innovation drastically reduces the physical RAM needed to run complex AI.
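To put the 6x figure in perspective, here is a rough back-of-the-envelope sketch in Python. The model shape used (32 layers, 8 KV heads, a head dimension of 128, a 131,072-token context, 16-bit values) is hypothetical and comes neither from the report nor from Google. Applying the full best-case 6x reduction to the KV cache alone is also an assumption made purely for illustration.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch=1, bytes_per_value=2):
    """Approximate KV cache size.

    Each token stores one key vector and one value vector (hence the factor
    of 2) per layer and per KV head.
    """
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value


GIB = 2**30

# Hypothetical model shape, chosen only to give round numbers.
baseline = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=131_072)

# Assumption: the best-case "up to 6x" reduction applies to the whole cache.
compressed = baseline / 6

print(f"baseline KV cache:  {baseline / GIB:.1f} GiB")
print(f"after 6x reduction: {compressed / GIB:.1f} GiB")
```

Under these assumptions, a single long-context session drops from 16 GiB of cache to under 3 GiB. That gap is roughly the difference between needing a high-capacity memory configuration and fitting comfortably on today's GDDR-equipped cards.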

This raises a critical question: if software can already reduce AI's hunger for memory by 6x, will expensive hardware answers to the capacity bottleneck, like stacked GDDR, still come to market? The report acknowledges that technological hurdles and cost management are crucial variables. It is possible that software advances could reduce the need for niche hardware "middle grounds" before Micron can even get a sample to market.

Conversely, Jevons' Paradox suggests that as efficiency lowers costs, total demand tends to rise rather than fall. Micron may still gamble that stacked GDDR will be the ideal solution for bringing ultra-high-density memory to the "edge," making massive, efficient local AI feasible where HBM remains too expensive. Beyond its high production cost, HBM also requires very expensive integration and co-fabrication with the chips that use it. Micron's purported new project, assuming ETNews is correct, could be exactly what the industry needs.