Google's Gemma 4 Open Models Target On-Device AI From Phones To GPUs

Specifically, Google says that Gemma 4 31B Dense and the Gemma 4 26B Mixture-of-Experts model offer "frontier intelligence," claiming that these two relatively small models can outperform GPT-OSS-120B, Qwen3.5-122B, and Mistral-Large-3 in like-for-like, head-to-head comparisons. That's impressive stuff considering that those models have considerably more parameters and, accordingly, require much more hardware to run.

Not that those Gemma 4 models are exactly lightweight; Google notes that the unquantized bfloat16 weights "fit efficiently on a single 80GB NVIDIA H100 GPU." That's all fine and well for folks who have such hardware, but folks using "meager" 32GB and 24GB gaming GPUs will have to settle for quantized versions that necessarily lose some performance. Still, if Google's remarks about these models offering "state of the art performance for their size" are accurate, they should be quite good even in a quantized state. Google says they can support "up to a quarter-million tokens context window," which is absolutely massive, though how much of that you can actually use will depend on your hardware.
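To put rough numbers on that, here's a back-of-the-envelope sketch of the weight-only arithmetic. It assumes the parameter counts are simply the nominal 31 billion and 26 billion from the model names (the exact counts may differ), that bfloat16 takes 2 bytes per parameter, and that 8-bit and 4-bit quantization take roughly 1 and 0.5 bytes per parameter. The function names are made up for illustration, and the math ignores the KV cache, activations, and runtime overhead, so real-world requirements will be higher, especially with long contexts.

```python
# Rough, weight-only memory estimate. This is an illustrative sketch, not a
# measurement: it ignores the KV cache, activations, and framework overhead.

# Approximate storage cost per parameter for each format (assumed values;
# real quantization schemes add a little overhead for scales and zero points).
BYTES_PER_PARAM = {
    "bfloat16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_gb(params_billions: float, dtype: str) -> float:
    """Approximate weight size in decimal gigabytes (1 GB = 1e9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * BYTES_PER_PARAM[dtype]


def fits(params_billions: float, dtype: str, vram_gb: float) -> bool:
    """True if the weights alone would fit in the given amount of VRAM."""
    return weight_gb(params_billions, dtype) <= vram_gb


if __name__ == "__main__":
    # Nominal sizes taken from the model names; hypothetical inputs.
    models = [("Gemma 4 31B Dense", 31.0), ("Gemma 4 26B MoE", 26.0)]
    gpus_gb = [80, 32, 24]

    for name, params in models:
        for dtype in BYTES_PER_PARAM:
            size = weight_gb(params, dtype)
            verdict = ", ".join(
                f"{gpu}GB: {'yes' if fits(params, dtype, gpu) else 'no'}"
                for gpu in gpus_gb
            )
            print(f"{name} @ {dtype}: ~{size:.1f} GB of weights ({verdict})")
```

By this estimate, the 31B model's bfloat16 weights come to about 62 GB, which lines up with Google's single-H100 claim while ruling out 24GB and 32GB cards. Note that a Mixture-of-Experts model typically needs all of its experts resident in memory, so the 26B model's full parameter count is what matters for this calculation, even if only a fraction of those parameters is active per token.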

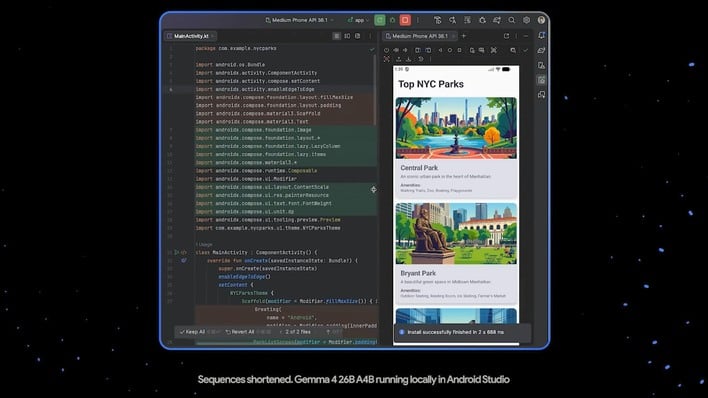

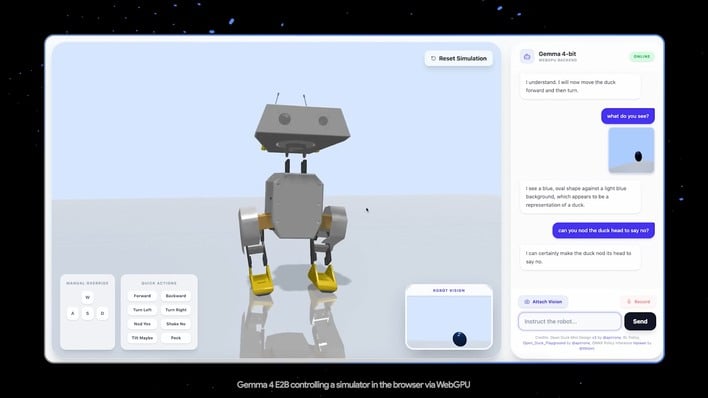

For smaller devices and more specialized use cases, Gemma 4 also includes "Effective 2B" and "Effective 4B" models. It's not completely clear exactly what "Effective" is meant to imply here, but these models retain full multimodality (meaning they can natively work with images, text, and audio) while being small enough to run on devices like phones and single-board computers, apparently with "near-zero latency."

Arguably the most notable thing about these models is their license, though. Google's released Gemma 4 under the Apache 2.0 license, which is not literally "do whatever you want," but it's pretty close. Most importantly, it's "commercially permissive," as Google puts it, which means you can use these models for your own projects that make money without worrying about Google coming after you for it.

If you're keen to try out the Gemma 4 models, you can get them from Hugging Face, Kaggle, or Ollama as usual, but if you just want to fool around a bit, you can also try them on Google's AI Edge Gallery for iOS and Android. Head over to Google's blogs for more information about that as well as links to the downloads.