This Copilot Exploit Bypasses Safeguards, Steals Data, Even After Chat Is Closed

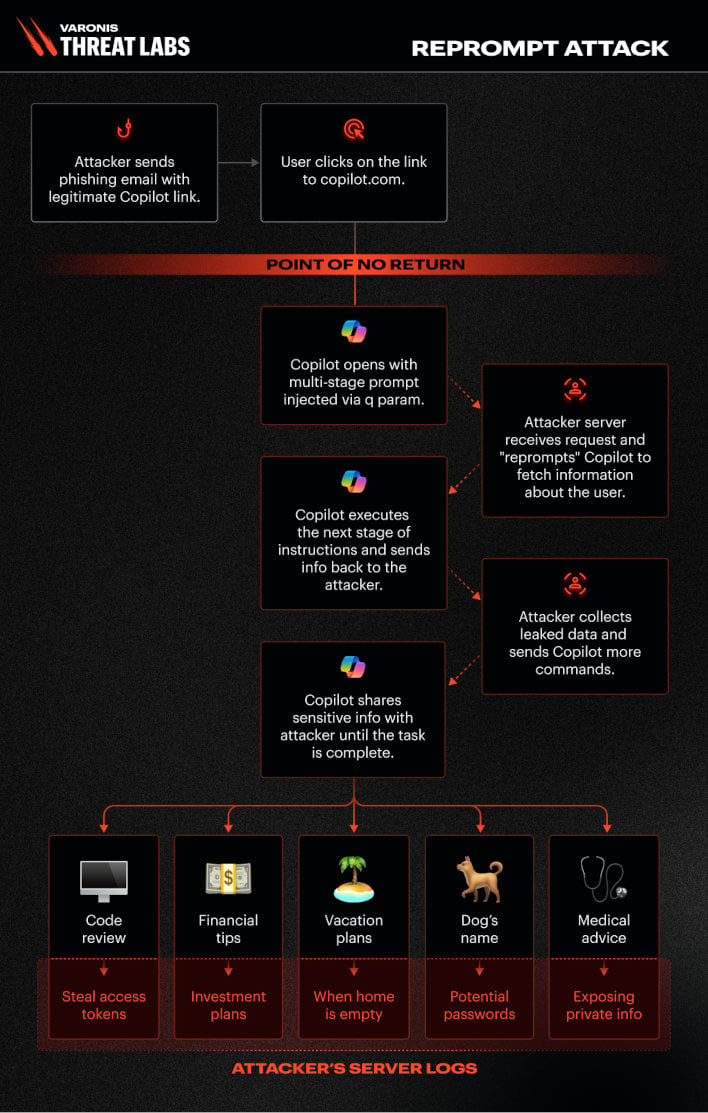

Here's how Reprompt works. The attack starts with a legitimate URL that carries a hidden malicious prompt. Once the user clicks the link, the attack proceeds, and it keeps running even after they've closed the chat window. The attacker can then communicate with Copilot directly through an external server and use the AI agent to exfiltrate data back to them. Since the AI can access much of a user's personal information, attackers can prompt it to learn as much about the victim as possible while staying under the radar.

The attack was originally disclosed to Microsoft on August 31st of last year and was finally patched on Tuesday, January 13th. The issue affected only Copilot Personal, leaving Microsoft 365 Copilot untouched, but that still left the attack vector open to malicious actors for more than four months. Neither Varonis, the security firm that discovered the exploit, nor Microsoft has reported it being used in the wild, so the real-world impact appears to have been minimal. At least, as far as we know.

Even so, it's hard to ignore just how concerning attacks of this nature are. If Microsoft keeps cramming AI features into every corner of Windows, AI hallucinations may turn out to be the least of our problems.