Google Detects First AI-Developed Zero-Day Exploit Used by Threat Actors

The most significant milestone is the discovery of the first publicly documented zero-day exploit built with AI assistance. A criminal group used large language models (LLMs) to identify a high-level semantic logic flaw in a popular open-source system administration tool, which Google chose not to name. The actors then had the model generate a Python-based exploit that bypassed two-factor authentication, demonstrating that AI can spot hard-coded trust assumptions that traditional automated tools might miss. Google says its proactive counter-discovery may have prevented mass exploitation, but the event shows how sharply AI can compress the timeline between vulnerability discovery and weaponization.
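Google has not disclosed the actual flaw, so the following is only a hypothetical illustration of what a "hard-coded trust assumption" in a two-factor check can look like, written from a defender's point of view. The header name, function names, and parameters are all invented for this sketch and are not taken from the affected tool.

```python
import hmac

# Hypothetical header name, invented for this illustration only.
TRUSTED_CLIENT_HEADER = "X-Internal-Client"


def _codes_match(submitted_code: str, expected_code: str) -> bool:
    # Constant-time comparison of the submitted and expected one-time codes.
    return hmac.compare_digest(submitted_code.encode(), expected_code.encode())


def second_factor_ok_flawed(headers: dict, submitted_code: str, expected_code: str) -> bool:
    # Flawed: the code assumes that only internal clients can send this header.
    # Any caller can set it, so the second factor is skipped entirely.
    if headers.get(TRUSTED_CLIENT_HEADER) == "1":
        return True
    return _codes_match(submitted_code, expected_code)


def second_factor_ok_fixed(headers: dict, submitted_code: str, expected_code: str) -> bool:
    # Fixed: no caller-controlled input can bypass the code check.
    return _codes_match(submitted_code, expected_code)


if __name__ == "__main__":
    spoofed = {TRUSTED_CLIENT_HEADER: "1"}
    print(second_factor_ok_flawed(spoofed, "000000", "123456"))
    print(second_factor_ok_fixed(spoofed, "000000", "123456"))
```

Nothing in the flawed version is malformed, which is why a scanner that matches known bug patterns is likely to pass over it. The problem lies in what the code assumes about who is calling it.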

The report highlights PROMPTSPY, an Android malware strain. Unlike traditional payloads, PROMPTSPY integrates directly with generative AI APIs during execution. Its "GeminiAutomationAgent" module captures the device's UI, sends it to the model for analysis, and receives commands in real time (swipes, taps, navigation). This allows the malware to operate semi-autonomously, even blocking "Uninstall" buttons with invisible overlays and replaying biometric lock screen gestures.

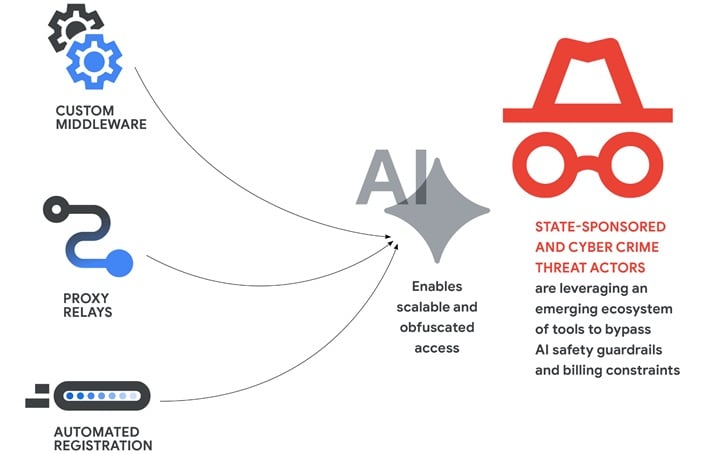

Nation-state actors have moved beyond curiosity into scaled exploitation:

- North Korea (APT45): Used recursive prompting to industrialize CVE analysis and validate proof-of-concept exploits.

- China (UNC2814 & APT27): Employed "expert-persona" jailbreaking to research firmware flaws and accelerated the development of relay networks to anonymize attack traffic.

- Russia: Deployed AI-generated decoy code to camouflage malware and utilized AI voice cloning for sophisticated "Operation Overload" influence campaigns.

To counter these threats, Google is deploying AI-driven defenses:

- Big Sleep: An agent developed by Google DeepMind and Project Zero to find unknown vulnerabilities.

- CodeMender: An experimental tool using Gemini’s reasoning to automatically patch critical flaws.