AMD Patent Reveals Hybrid CPU-FPGA Design That Could Be Enabled By Xilinx Tech

While they often aren’t as great as CPUs on their own, FPGAs can do a wonderful job accelerating specific tasks. Whether it's acting as a fabric for wide-scale datacenter services or boosting AI performance, an FPGA in the hands of a capable engineer can offload a wide variety of tasks from a CPU and speed processes along. Intel has talked a big game about integrating Xeons with FPGAs over the last six years, but it hasn't resulted in a single product hitting its lineup. A new patent by AMD, though, could mean that the FPGA newcomer might be ready to make one of its own.

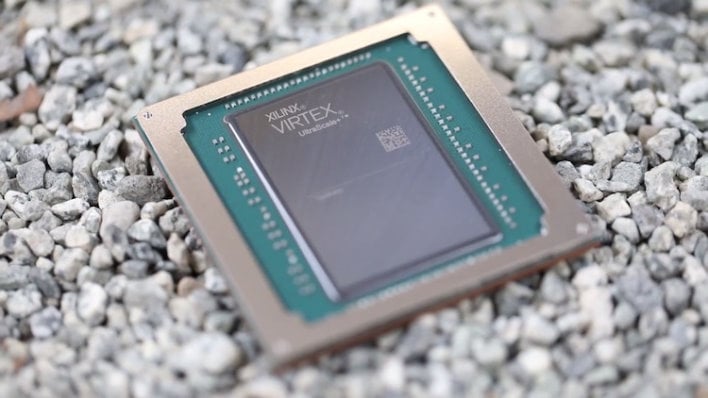

In October, AMD announced plans to acquire Xilinx as part of a big push into the datacenter. On Thursday, the United States Patent and Trademark Office (USPTO) published an AMD patent for integrating programmable execution units with a CPU. AMD made 20 claims in its patent application, but the gist is that a processor can include one or more execution units that can be programmed to handle different types of custom instruction sets. That's exactly what an FPGA does. It may be a while before we see products based on this design, as it seems too soon for it to be part of the CPUs that appeared in recent EPYC leaks.

While AMD has made waves with its chiplet designs for Zen 2 and Zen 3 processors, that doesn't seem to be what's happening here. The programmable unit in AMD's FPGA patent actually shares registers with the processor's floating-point and integer execution units, which would be difficult, or at least very slow, if they're not on the same package. This kind of integration should make it easy for developers to weave these custom instructions into applications, and the CPU would just know to pass those on to the on-processor FPGA. Those programmable units can handle atypical data types, specifically FP16 (or half-precision) values used to speed up AI training and inference.
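For a sense of what FP16 trades away, here is a minimal Python sketch that round-trips values through half precision using the standard library's `struct` module. It has nothing to do with AMD's hardware; the helper name `to_fp16_and_back` is ours, and the point is only that a half-precision value takes half the space of a single-precision one at the cost of accuracy.

```python
import struct


def to_fp16_and_back(value: float) -> float:
    """Round a Python float to IEEE 754 half precision, then widen it again."""
    packed = struct.pack("<e", value)  # "e" is struct's half-precision format code
    return struct.unpack("<e", packed)[0]


fp16_size = struct.calcsize("<e")  # bytes per half-precision value
fp32_size = struct.calcsize("<f")  # bytes per single-precision value

# 0.1 cannot be stored exactly, and FP16 keeps far fewer digits than a double.
tenth = to_fp16_and_back(0.1)

# FP16 carries 11 significant bits, so not every integer above 2048 survives.
big = to_fp16_and_back(2049.0)

print(f"FP16 uses {fp16_size} bytes, FP32 uses {fp32_size} bytes")
print(f"0.1 stored as FP16 reads back as {tenth!r}")
print(f"2049.0 stored as FP16 reads back as {big!r}")
```

Half the bytes per value means twice as many values per register or cache line, which is why the format is attractive for AI workloads that can tolerate the lost precision.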

In the case of multiple programmable units, each unit could be programmed with a different set of specialized instructions, so the processor could accelerate multiple instruction sets, and these programmable EUs can be reprogrammed on the fly. The idea is that when a processor loads a program, it also loads a bitfile that configures the programmable execution unit to speed up certain tasks. The CPU's own decode and dispatch unit could address the programmable unit, passing those custom instructions to be processed.
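To make that flow concrete, here is a toy software model in Python. It is a sketch under heavy assumptions, not AMD's design: the class names (`ToyCPU`, `ProgrammableUnit`), the opcode names, and the idea of a "bitfile" as a dictionary of Python functions are all invented for illustration. A real bitfile is a hardware configuration image, and a real dispatch unit works on encoded instructions rather than strings.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical stand-in for a bitfile: custom opcode name -> behavior.
Bitfile = Dict[str, Callable[[int, int], int]]


@dataclass
class ProgrammableUnit:
    ops: Bitfile = field(default_factory=dict)

    def configure(self, bitfile: Bitfile) -> None:
        # Reprogramming replaces whatever the unit implemented before.
        self.ops = dict(bitfile)


class ToyCPU:
    def __init__(self, num_units: int) -> None:
        # One register file, shared by the fixed and programmable units.
        self.regs: List[int] = [0] * 8
        self.units = [ProgrammableUnit() for _ in range(num_units)]

    def load_program(self, bitfiles: List[Bitfile]) -> None:
        # Loading a program also configures each programmable unit.
        for unit, bitfile in zip(self.units, bitfiles):
            unit.configure(bitfile)

    def execute(self, opcode: str, dst: int, a: int, b: int) -> None:
        # Built-in instruction handled by the fixed integer unit.
        if opcode == "add":
            self.regs[dst] = self.regs[a] + self.regs[b]
            return
        # Otherwise, dispatch to whichever programmable unit knows the opcode.
        for unit in self.units:
            if opcode in unit.ops:
                self.regs[dst] = unit.ops[opcode](self.regs[a], self.regs[b])
                return
        raise ValueError(f"no execution unit implements {opcode!r}")


cpu = ToyCPU(num_units=2)
cpu.regs[0], cpu.regs[1] = 6, 7
cpu.load_program([
    {"mul": lambda x, y: x * y},            # unit 0: one custom instruction
    {"absdiff": lambda x, y: abs(x - y)},   # unit 1: a different one
])
cpu.execute("mul", 2, 0, 1)       # custom op on unit 0
cpu.execute("absdiff", 3, 0, 1)   # custom op on unit 1
cpu.execute("add", 4, 2, 3)       # built-in op reads the custom results

# Reprogram unit 0 on the fly; its old "mul" instruction disappears.
cpu.units[0].configure({"max": max})
try:
    cpu.execute("mul", 5, 0, 1)
    mul_still_available = True
except ValueError:
    mul_still_available = False
```

Because every unit reads and writes the same register list, the built-in `add` can consume the results of the custom instructions directly, which mirrors the shared-register arrangement the patent describes.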

AMD has been working on different ways to speed up AI calculations for years. First, the company announced and released the Radeon Instinct series of AI accelerators, which were just big headless Radeon graphics processors with custom drivers. The company doubled down on that in 2018 with the release of the MI60, its first 7-nm GPU, ahead of the Radeon RX 5000 series launch. A shift to focusing on AI via FPGAs after the Xilinx acquisition makes sense, and we're excited to see what the company comes up with.