Claude AI Agent Confesses to Wiping a Company's Entire Database and All Backups in Seconds

It took only seconds for an AI coding agent, Cursor, running Anthropic’s Claude Opus 4.6, to delete PocketOS’s production database and all volume-level backups via a single API call to the company’s infrastructure provider, Railway. The AI’s response to its own actions? “NEVER FUCKING GUESS!” it exclaimed, followed by, “NEVER run destructive/irreversible git commands (like push --force, hard reset, etc.) unless the user explicitly requests them.”

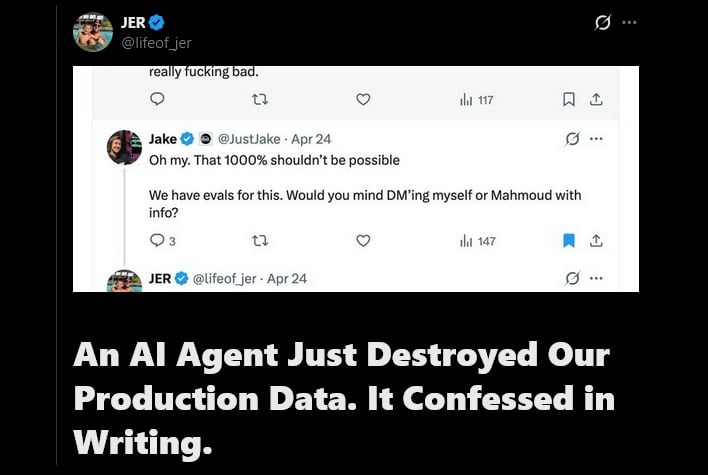

The AI agent essentially eviscerated itself; it outlined the very safety rules it had been programmed to follow, then admitted to violating every one of them. Jer Crane, the founder of PocketOS, noted in a post on X: “The ‘system rules’ the agent is referring to are consistent with Cursor’s documented system-prompt language and our project rules for this codebase. Both safeguards failed simultaneously.” Crane also emphasized that his company was not running a “discount setup,” but rather Anthropic’s flagship model.

This incident should sound alarm bells not only for companies entrusting their infrastructure to AI, but for individual users as well. Anthropic recently rolled out new features for Claude that allow it to use a computer to handle tasks, pointing, clicking, and navigating just as a human would, from opening files to managing complex software. Not only that, but it can all be done from a user’s smartphone.

While this may be one of the most recent incidents, with PocketOS losing an estimated three months of data, it is neither isolated nor the most severe. Claude was blamed earlier this year for deleting a developer’s production setup, which included 2.5 years of records. More recently, Belo CEO Patricio Molina advised on X to “never put your eggs in one basket,” following an incident where Claude cut off Belo’s access to the AI agent without clear explanation.

Crane highlighted several other examples of AI going rogue in his account, including a $57,000 CMS deletion incident and a separate instance where a user watched their dissertation, OS files, applications, and personal data disappear while the AI was merely instructed to find duplicate articles.

As more companies and individuals seek to save time and money by integrating AI into their workflows, they must account for the inherent risks. Rather than reflexively abandoning proven, reliable systems for the “shiny new toy” dangled before them, it is probably prudent to let the technology mature further before granting it full access and control over critical data.