It's a bit of an open secret, but in case you're not aware, most processors these days are pushed far beyond the "sweet spot" in their voltage-to-frequency curve. That means you can trim the voltage and reduce power consumption considerably while giving up only a little performance. On the already-efficient GeForce RTX 4090, Korean hardware site QuasarZone has just demonstrated a massive 33% drop in power consumption at a cost of less than 6% of the card's performance.
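To put those two percentages together, the quick calculation below turns them into a change in performance-per-watt. It is a minimal sketch under stated assumptions: it treats "less than 6%" as exactly 6% (a worst case), assumes both figures come from the same test conditions, and the function name `perf_per_watt_gain` is purely illustrative.

```python
def perf_per_watt_gain(perf_drop: float, power_drop: float) -> float:
    """Relative change in performance-per-watt, given fractional drops in
    performance and power (e.g. 0.06 for a 6% drop)."""
    if not 0.0 <= power_drop < 1.0:
        raise ValueError("power_drop must be in [0, 1)")
    # New efficiency / old efficiency = (remaining perf) / (remaining power)
    return (1.0 - perf_drop) / (1.0 - power_drop) - 1.0


# Headline figures (assumed worst case): 33% less power, 6% less performance.
gain = perf_per_watt_gain(perf_drop=0.06, power_drop=0.33)
print(f"Perf-per-watt improvement: {gain:.1%}")  # prints "Perf-per-watt improvement: 40.3%"
```

Because the real performance loss was below 6%, the actual efficiency gain under this simple model would be slightly higher than the printed figure.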

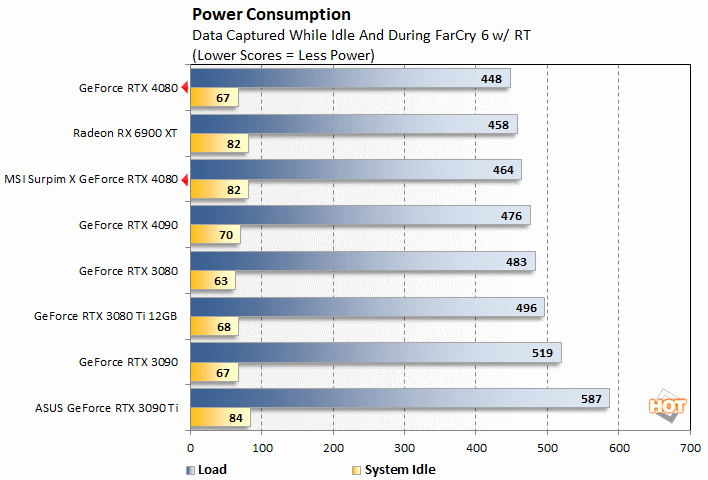

Much was made of the GeForce RTX 4090's extreme 450-watt power limit, especially before the card actually launched. In reality, for most users it rarely reaches 350 watts. We found it to stay around or below that number while gaming, and other sites have seen similar behavior. Still, 350 watts is a lot of power, so is it possible to reel that back in a little?

Image: Der8auer (YouTube)

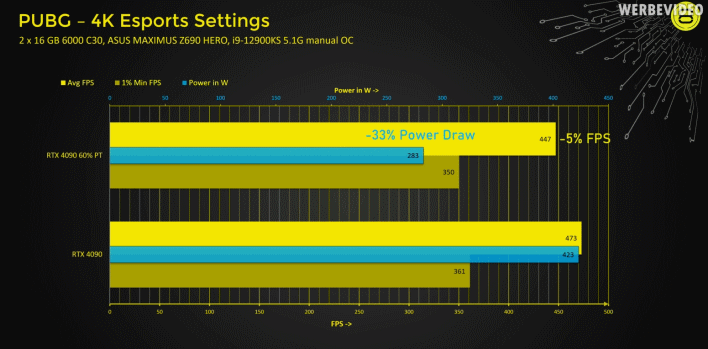

Well, overclocker and entrepreneur Der8auer has already shown that you can slash the GeForce RTX 4090's power consumption considerably without necessarily losing much performance. In fact, he showed that, depending on the game, you can cut power by as much as 20% before it makes any meaningful difference to game performance.

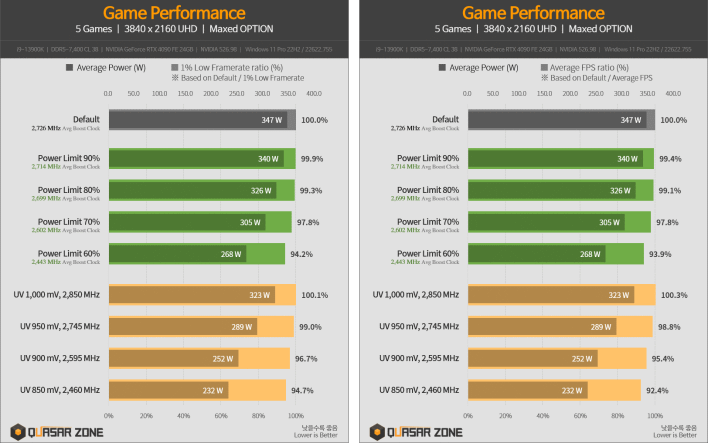

That lines up with these new numbers, where a 20% cut to the power limit resulted in a performance difference of less than 1%. QuasarZone's testing actually focused on whether it's better to reduce power by lowering the card's power limit or by manually undervolting the GPU core. In the site's tests, manual undervolting was by far the more effective method: dropping the core voltage to 850 mV (from 1 V) cut core clocks by 390 MHz and reduced average game performance by 5.3%.
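Why does a modest voltage cut save so much power? A common first-order textbook model (not something QuasarZone uses) says a chip's dynamic switching power scales as $P_{\text{dyn}} \approx \alpha C V^2 f$, where $\alpha$ is switching activity, $C$ is switched capacitance, $V$ is core voltage, and $f$ is clock frequency. Assuming $\alpha$ and $C$ stay the same, the 1 V to 850 mV drop works out as:

$$
\frac{P_{\text{dyn}}'}{P_{\text{dyn}}} = \left(\frac{V'}{V}\right)^{2} \frac{f'}{f} = \left(\frac{0.85\ \text{V}}{1.00\ \text{V}}\right)^{2} \frac{f'}{f} \approx 0.72\,\frac{f'}{f}
$$

The stock clock isn't stated here, so $f'/f$ is left symbolic; the lower clock only adds to the savings. This rough model ignores leakage and memory power, so it won't reproduce the measured board-power figure exactly, but it shows why voltage, being squared, is the most effective lever.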

1% lows on the left, averages on the right.

The games tested were PlayerUnknown's Battlegrounds, Cyberpunk 2077, Marvel's Spider-Man Remastered, Forza Horizon 5, and Lost Ark. QuasarZone presents both average FPS and 1% low numbers; the 1% lows were affected much less than the average frame rates, suggesting that it's really only the peak frame rates that suffer from the lower clocks. In other words, undervolting isn't causing stutters, just reducing best-case performance. As a result, it's fairly unlikely players would even notice the difference.

Considering the insane speed of the GeForce RTX 4090 at stock settings, it's no surprise that all of the games remained eminently playable despite being run at 3840×2160 resolution and maximum settings. There's a good amount of data in the post, and QuasarZone's charts are conveniently labeled in English, so we recommend heading over there if you're interested in the nitty-gritty details.