PimEyes Is A Facial Recognition Search Engine That’s Disturbingly Accurate And Creepy

Facial recognition tools can serve a variety of purposes. One intended use is letting people search the internet for images of themselves. However, the same tools could be put to more sinister uses, such as stalking, much as Apple's AirTag has been misused. Clearview AI, whose facial recognition software is often used by law enforcement, recently reached a settlement with the ACLU over its use of Illinois residents' biometric data without their consent.

PimEyes offers a facial recognition tool that can be highly effective and accurate. Anyone can try the service by uploading an image of a face to its search engine. Once a photo is uploaded, the search engine takes only seconds to scour the internet and return its results. The free search only scratches the surface, however; a more in-depth search requires a paid subscription.

In an article by The New York Times, a dozen of its journalists uploaded images of themselves, with their consent, to test the tool. Every search returned images of the journalists, along with some images of other people. Some of these incorrect matches came from pornography sites, and all of those were matches for the women involved in the test. Needless to say, the women whom the software wrongly matched found those results disturbing.

Cher Scarlett, a software engineer who led the #AppleToo movement before leaving Apple, shared her experience with PimEyes in a candid blog post. She wrote that her search results included images reportedly tagged with terms such as "abuse," "torture," and "choke." The search brought back memories of Scarlett's abusive past, which included sexual trauma and suicide attempts.

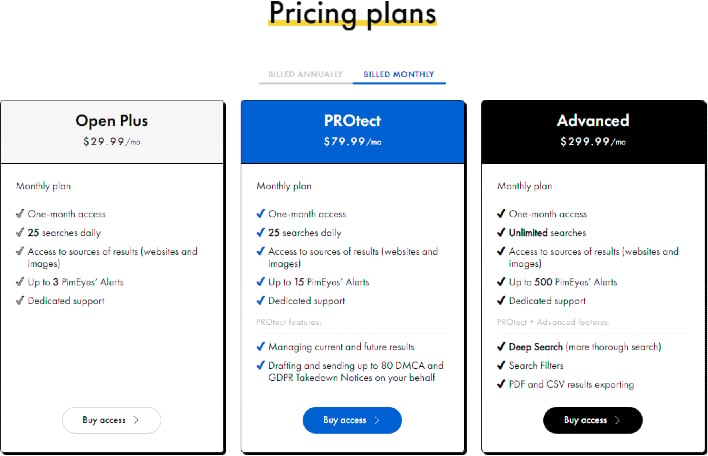

PimEyes offers three subscription tiers that can be paid monthly or yearly, ranging in price from $30 to $300 a month. If you want PimEyes to hide your images from others, you will need to subscribe to one of the higher tiers. But as Scarlett pointed out in her blog post, "Want to see scenes from an actual sex trafficking torture porn? Check out PimEyes. It'll only cost me $299.99 a month to stop you."

You can opt out of having your images found through the service. However, according to a PimEyes blog post, the complexity of the AI involved means some images may not be detected and hidden from others. If you find that some images are still viewable, you can submit a form to have them removed from search results.

PimEyes has received a lot of attention since various news outlets began reporting on it, and the coverage seems to have only made the service more popular, as its usage has increased. As with any technology of this kind, some people will choose to abuse it for heinous purposes. Hopefully, PimEyes will introduce more safety measures in the future to protect users and reduce false matches.

Top Image Credit: Pixabay