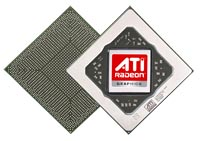

ATI Radeon HD 2900 XT - R600 Has Arrived

Take a look at the red-head standing over there. If you're a regular reader here at HotHardware, you know exactly who she is. That's ATI's adventurous and oh-so-curvaceous front-woman, Ruby. And she's holding that 'Perfect 10' sign for a very good reason. It's probably not the reason you're thinking of, however. For a digital personality, she's definitely pretty darn hot; maybe not a 10 in our book, but pretty darn close nonetheless. No, she's holding that sign not as a proclamation of her hotness, but rather to give you all a hint as to what ATI has in store for the PC in the coming days, weeks, and months.

Today is the day many PC enthusiasts have been waiting for. And we say this with some hard data to reference. In January we ran a poll, and nearly 40% of over 4,000 respondents said they were waiting to see ATI's next-gen R600 architecture before passing judgment on the already-released GeForce 8 Series. Despite the arrival of a clearly more powerful and significantly more feature-rich GPU architecture, an almost equal number of you decided to wait to see ATI's hand before betting on NVIDIA's G80. Today, we can finally tell you what ATI's been working on for the past few years, and that 'Perfect 10' sign reveals part of the story.

ATI has chosen today, May 14, 2007 - my second wedding anniversary, incidentally - to reveal a line-up of 10 desktop and mobile GPUs, all derived from their R600 architecture. The line-up ranges from sub-$100 entry-level graphics cards to a $399 high-end part that's designed to do battle with NVIDIA's GeForce 8800 GTS. What about taking on the GeForce 8800 GTX and Ultra, you ask? Well, the new, AMD-owned ATI is moving in a somewhat different direction now, and at least currently, they don't plan to produce a low-volume, ultra-high performing part that only a fraction of the enthusiast crowd can afford. We know, some of you are crestfallen right now; we were too at first. But don't sweat it. The arrival of the R600 and its derivatives is a very good thing. We'll try to better explain on the pages ahead. For now, here are the specifications of ATI's new flagship graphics card, officially named the Radeon HD 2900 XT.

- 700 million transistors on 80nm HS fabrication process

- 512-bit 8-channel GDDR3/4 memory interface

- Ring Bus Memory Controller

- Unified Superscalar Shader Architecture

- Full support for Microsoft DirectX 10.0

- Dynamic Geometry Acceleration

- Anti-aliasing features

- CrossFire Multi-GPU Technology

- Texture filtering features

- ATI Avivo HD Video and Display Platform

- PCI Express x16 bus interface

- OpenGL 2.0 support

We have a plethora of information related to today's launch available on our site that will help you get familiar with ATI's previous GPU architectures and their key features. The Radeon HD 2900 XT and its derivatives in the Radeon HD 2000 family are totally new, but they do have a number of features in common with some members of the Radeon X1K family of products.

- NVIDIA GeForce 8800 GTX and GTS Launch

- Radeon X1950 Pro with Native CrossFire

- Radeon X1950XTX & X1900 XT 256MB Refresh

- AMD & ATI Merger: Questions and Answers

- ATI CrossFire Xpress 3200 Chipset Evaluation

- Radeon X1K Family Review

- ATI Crossfire Multi-GPU Technology Preview

If you haven't already done so, we recommend scanning through our CrossFire Multi-GPU technology preview, the Radeon X1950 Pro with Native CrossFire article, the X1K family review, and our NVIDIA GeForce 8800 GTX and GTS launch coverage. In those four pieces, we cover a large number of the features offered by the new Radeon HD 2000 series and explain many of the benefits of DirectX 10. We recommend reading these articles because there is quite a bit of background information in them that'll lay the foundation for what we're going to showcase here today.