NVIDIA GeForce GTX 580: A New Flagship Emerges

Even before the GF100-based GeForce GTX 480 officially arrived, myriad news reports and rumors claimed the cards would be hot, loud, and power-hungry, not to mention late to market. Unfortunately for NVIDIA, all of those things ended up being true to some degree. In all fairness, the GeForce GTX 480 did end up being the fastest single-GPU card available, and things have only gotten better with recent driver releases, but it’s no secret that the GeForce GTX 480 wasn’t everything NVIDIA had hoped it would be.

Of course, NVIDIA knew that well before the first card ever hit a store shelf, and the company got to work on a revision of the GPU and the card itself to address the concerns with the GF100 and, in turn, the GeForce GTX 480. The fruits of NVIDIA’s labor culminate in the product we’re going to be showing you here today, the GF110-based GeForce GTX 580.

Its name suggests the GeForce GTX 580 is a next-gen product, but make no mistake, the GF110 GPU powering the card is largely unchanged from the GF100 in terms of its features. However, refinements have been made to the design and manufacture of the chip, along with its cooling solution and PCB. The end product is a higher-performing, lower-power card that also happens to be much quieter than its predecessor.

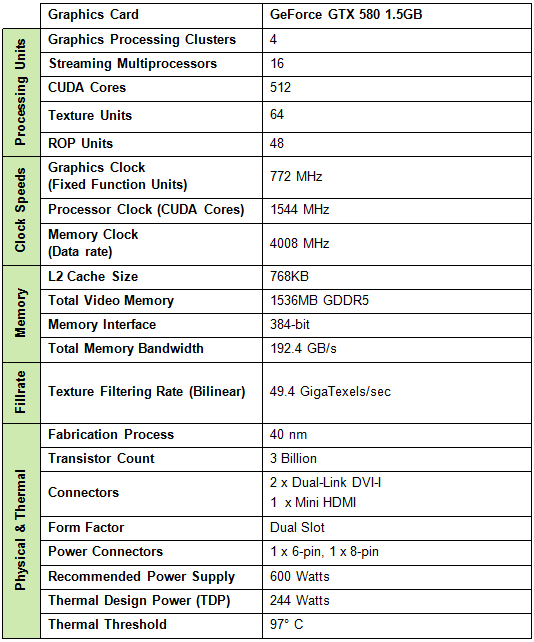

Take a look at the GeForce GTX 580’s specs below, then move on to the deep dive, complete with a full suite of tests in both single- and dual-card configurations on the pages ahead...

NVIDIA's GeForce GTX 580 Exposed


Looking at the above features and specifications, it's obvious that the new GeForce GTX 580 is very similar, if not virtually identical, to the GeForce GTX 480, which was released a few months back. In fact, the GF100 GPU (GTX 480) and GF110 (GTX 580) share the very same architecture and feature set. As such, we'd strongly recommend checking out our coverage of the GeForce GTX 480 launch for the full scoop on what NVIDIA's high-end, DirectX 11-class GPU can do, because we're not going to rehash it all here. With that said, the GF110 is a refinement of the GF100 design, and some changes have been made to the ROPs and to the transistors used in parts of the chip.

Like the GF100, the GF110 comprises roughly 3 billion transistors and is manufactured on TSMC's 40nm process node. The GPU features 512 CUDA cores, 16 geometry units, 4 raster units, 64 texture units, 48 ROPs, and a 384-bit GDDR5 memory interface. Remember, though, that only 480 cores are enabled on the GeForce GTX 480; on the GF110 powering the GTX 580, all 512 CUDA cores are enabled. The reference GPU clock is 772MHz, up from 700MHz on the GTX 480. The shader clock on the 580 is also increased to 1544MHz (1401MHz on the GTX 480), and the memory clock is similarly increased from 924MHz on the GTX 480 to 1001MHz on the GTX 580. The combination of additional CUDA cores and higher frequencies alone will result in increased performance over the GTX 480, but we're also told that some enhancements have been made to the GF110's ROPs as well, which result in better Z-Cull performance. Details of those changes weren't made readily available, however.
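To put the core-count and clock changes in perspective, here is a rough back-of-the-envelope sketch in Python that turns the figures quoted above into theoretical peak numbers. Two things in it are our assumptions rather than anything NVIDIA stated in the specs above: that each CUDA core can retire one fused multiply-add (2 FLOPs) per shader clock, and that GDDR5 moves four transfers per quoted memory clock. The function names are purely illustrative.

```python
# Rough theoretical-peak arithmetic from the quoted specs.
# ASSUMPTIONS (not from the spec list): 2 FLOPs per CUDA core per shader
# clock (one fused multiply-add), and 4 data transfers per quoted GDDR5
# memory clock. Real-world throughput will be lower.

BUS_BITS = 384  # memory interface width shared by both cards

CARDS = {
    "GTX 480": {"cores": 480, "shader_mhz": 1401, "memory_mhz": 924},
    "GTX 580": {"cores": 512, "shader_mhz": 1544, "memory_mhz": 1001},
}


def peak_gflops(cuda_cores, shader_mhz, flops_per_clock=2):
    """Theoretical single-precision peak in GFLOPS."""
    return cuda_cores * shader_mhz * flops_per_clock / 1000.0


def memory_bandwidth_gbs(memory_mhz, bus_bits=BUS_BITS, transfers_per_clock=4):
    """Theoretical memory bandwidth in GB/s (bus width converted to bytes)."""
    return memory_mhz * transfers_per_clock * (bus_bits / 8) / 1000.0


def summarize(cards=CARDS):
    """Return {card name: (GFLOPS, GB/s)} for each card in the table."""
    return {
        name: (
            peak_gflops(spec["cores"], spec["shader_mhz"]),
            memory_bandwidth_gbs(spec["memory_mhz"]),
        )
        for name, spec in cards.items()
    }


if __name__ == "__main__":
    for name, (gflops, gbs) in summarize().items():
        print(f"{name}: {gflops:.0f} GFLOPS, {gbs:.1f} GB/s")
```

Under those assumptions, the GTX 580 works out to roughly 1,581 GFLOPS and 192 GB/s versus about 1,345 GFLOPS and 177 GB/s for the GTX 480, or around 18% more shader throughput and 8% more memory bandwidth on paper. Treat these as ceilings, not predictions; the benchmarks are what count.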

In addition to the aforementioned items, NVIDIA tells us that it has been working closely with foundry partner TSMC and has modified the transistors used in some parts of the chip. Whereas the GF100 used TSMC's fastest-switching, and also "leakiest", transistors throughout, the GF110 uses a combination of high-speed and lower-speed transistors to somewhat reduce current leakage in the chip.

Along with the changes at the chip level, NVIDIA has also made some tweaks to the cooler design, the PCB, and the power delivery circuitry on the GeForce GTX 580. Unlike the GeForce GTX 480, which used a huge GPU cooler with multiple heat-pipes, the GeForce GTX 580 employs a newly designed Vapor Chamber cooler. Vapor Chamber coolers are not new, but the custom Vapor Chamber used on the GTX 580 is better equipped to handle the intense heat output and temperature fluctuations of a high-end GPU. Paired with a newly designed fan and adaptive fan controller, the end result is a cooling solution that is significantly quieter than the GTX 480's. We should also point out that the fan shroud on the GeForce GTX 580 has been optimized as well and now features a sharp drop-off on one end to improve airflow into the fan when two cards sit right next to each other in an SLI configuration.

The GeForce GTX 580 also sports a new hardware monitoring feature that measures current on each of the card's 12V rails and dynamically adjusts voltage to keep total power in check. Currently, this feature works in conjunction with the card's drivers and kicks in for only two applications, Furmark and OCCT. These two applications employ workloads that are known to push the power consumption of some graphics cards so high that they'll operate outside of their thermal and power envelopes. To protect the card in these situations, power to the card can be managed using this new hardware monitoring feature.
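NVIDIA hasn't published how this logic is actually implemented, so the Python sketch below is only a conceptual illustration of the behavior described above: add up the power drawn across the monitored 12V rails and flag the card for limiting only when a watched application is running and the total exceeds a cap. Every name in it, the rail labels, and the power limit passed in are hypothetical and are not taken from NVIDIA's driver or hardware.

```python
# Conceptual sketch only: this is NOT NVIDIA's implementation.
# All names, rail labels, and the power limit are hypothetical.
from dataclasses import dataclass

RAIL_VOLTAGE = 12.0  # volts; the feature monitors the card's 12V rails
WATCHED_APPS = ("furmark", "occt")  # the two applications named above


@dataclass
class RailSample:
    name: str         # hypothetical label, e.g. "pcie_slot"
    current_a: float  # measured current in amps


def total_power_w(samples):
    """Sum power across all monitored rails (P = V * I per rail)."""
    return sum(RAIL_VOLTAGE * s.current_a for s in samples)


def should_limit(samples, app_name, power_limit_w, watched_apps=WATCHED_APPS):
    """True only if a watched app is running AND total power exceeds the cap."""
    if app_name.lower() not in watched_apps:
        return False
    return total_power_w(samples) > power_limit_w
```

For example, with hypothetical readings of 5A, 6A, and 11A on three rails (264W in total) and a hypothetical 250W cap, `should_limit` would return `True` while Furmark is running but `False` for an ordinary game, which mirrors the application-specific behavior described above.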