NVIDIA Unleashes Quadro 6000 and 5000 Series GPUs

High-end workstation graphics cards may be based on the same core architectures as gaming-targeted graphics cards, but their purposes are very different. While both accomplish the same basic task, processing commands and rendering images on-screen, workstation cards endure a more strenuous existence than their gaming counterparts. Workstation cards are used to solve complex, mission-critical problems: helping engineers design and build cars, helping architects plan and construct buildings, and even helping oil and gas companies find more effective means of production and transportation.

With 352 CUDA parallel processing cores and 2.5GB of GDDR5 memory, the Quadro 5000 should be a strong performer. Underneath the silver and black plastic shroud bearing NVIDIA's Quadro emblem, it uses a smaller version of the cooling system found on the GTX 470 and GTX 465 gaming models. The card offers four connectors: two DisplayPorts, one dual-link DVI, and one 3D stereoscopic connector. Both DisplayPort and dual-link DVI support resolutions up to 2560 x 1600, the native resolution of a typical 30" monitor. Any combination of the display outputs can be used, but only two can be active at one time. For this reason, the card supports only two monitors despite having three display output connectors.
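To make the two-active-outputs limit concrete, here is a minimal sketch that enumerates the monitor configurations the description above allows. The output labels and the `valid_configurations` helper are illustrative names, not anything from NVIDIA's drivers or tools, and the stereoscopic connector is left out on the assumption that it does not drive a monitor.

```python
from itertools import combinations

# Display-capable outputs as described in the text; the labels are illustrative.
DISPLAY_OUTPUTS = ("DisplayPort 1", "DisplayPort 2", "Dual-link DVI")

# The review states that only two outputs can be active at one time.
MAX_ACTIVE = 2


def valid_configurations(outputs=DISPLAY_OUTPUTS, max_active=MAX_ACTIVE):
    """Return every non-empty set of outputs that respects the active limit."""
    configs = []
    for count in range(1, max_active + 1):
        configs.extend(combinations(outputs, count))
    return configs


if __name__ == "__main__":
    for config in valid_configurations():
        print(" + ".join(config))
```

Under these assumptions there are three single-monitor and three dual-monitor arrangements, and no arrangement that uses all three display connectors at once.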

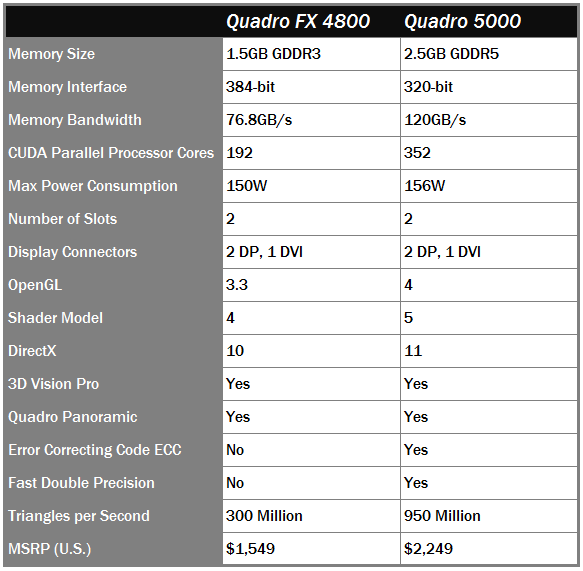

Quadro FX 4800 vs Quadro 5000 Comparison Chart

We put together this chart to quickly show the specs and features of the Quadro 5000 compared to the card it's replacing, the FX 4800. The 5000 offers 160 additional CUDA cores and provides 2.5GB of GDDR5 memory, along with a huge upgrade in memory bandwidth.

The Quadro 6000 is simply a powerhouse. It offers users 448 CUDA processing cores with an incredible 6GB of GDDR5 memory onboard. The silver and black shroud is identical to the one found on the Quadro 5000, and the video outputs on the rear bracket are the same as well.

Quadro FX 5800 vs Quadro 6000 Comparison Chart

Looking over the specs, the Fermi-based Quadro 6000 is a massive improvement over the FX 5800. With an extra 2GB of memory and 208 more CUDA processor cores, the Quadro 6000 packs a serious punch. Just don't expect it to come cheap; top-shelf performance usually comes with extravagant pricing, and that is the case here.

Physically, the Quadro 6000 and 5000 look almost identical. The only differences can be seen in the image above. Besides the naming labels, the Quadro 6000 sports two PCIe power connections, one 6-pin and one 8-pin, while the 5000 requires only one 6-pin power cable. Note that the top-of-the-line Quadro 6000 can run off either two 6-pin connectors or a single 8-pin connector. We also tested the card with both a 6-pin and an 8-pin power connector installed and had no issues.
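One plausible reason the two cabling options are interchangeable is that they supply the same nominal power budget. The sketch below adds up the commonly cited PCI Express limits (75W from the x16 slot, 75W per 6-pin connector, 150W per 8-pin connector). Those figures are assumptions taken from the PCIe specification rather than from this review, the helper name is hypothetical, and the cards' actual power draw is not stated here.

```python
# Nominal PCI Express power limits in watts. These come from the PCIe
# specification, not from the review, and are treated here as assumptions.
SLOT_W = 75
CONNECTOR_W = {"6-pin": 75, "8-pin": 150}


def available_power(connectors):
    """Nominal power budget: slot power plus every attached auxiliary cable."""
    return SLOT_W + sum(CONNECTOR_W[name] for name in connectors)


# Cabling options mentioned in the text for each card.
CONFIGURATIONS = {
    "Quadro 5000, one 6-pin": ["6-pin"],
    "Quadro 6000, two 6-pin": ["6-pin", "6-pin"],
    "Quadro 6000, single 8-pin": ["8-pin"],
    "Quadro 6000, 6-pin + 8-pin": ["6-pin", "8-pin"],
}

if __name__ == "__main__":
    for label, connectors in CONFIGURATIONS.items():
        print(f"{label}: {available_power(connectors)} W nominal")
```

With these nominal limits, two 6-pin cables and a single 8-pin cable both work out to the same budget, while populating both connectors simply leaves more headroom.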